Usage of etree.strip_tags

李魔佛 posted an article • 0 comments • 3879 views • 2019-10-24 11:24

strip_tags removes the given tags from the source HtmlElement but keeps their text and children, merging them into the parent element.

For example:

from lxml import etree
from lxml.html import fromstring

test_html = '''<p><span>hello</span><span>world</span></p>'''
test_element = fromstring(test_html)
etree.strip_tags(test_element, 'span')  # strip the span tags
etree.tostring(test_element)

The operation is applied to test_element in place, so test_element itself has been modified.

Serialized with etree.tostring, test_element is now:

b'<p>helloworld</p>'

Original article; please credit the source when reposting:

http://30daydo.com/article/553

MuMu emulator not recognized by adb

李魔佛 posted an article • 0 comments • 4916 views • 2019-10-17 08:41

<Forwarding name="ADB_PORT" proto="1" hostip="127.0.0.1" hostport="7555" guestport="5555"/>

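This port-forwarding entry can be found in the MuMu emulator's configuration: host port 7555 is forwarded to the guest's adb port 5555.

adb connect 127.0.0.1:7555

After that, adb shell works.

The configuration file is named myandrovm_vbox86.nemu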
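The port can also be read out of the forwarding entry programmatically. The sketch below is only an illustration: it parses the single element quoted above as a standalone string, and the helper name adb_connect_command is made up for this example. It assumes nothing about the rest of the .nemu file.

```python
import xml.etree.ElementTree as ET

# The forwarding entry quoted above, copied as a standalone snippet.
FORWARDING_XML = (
    '<Forwarding name="ADB_PORT" proto="1" hostip="127.0.0.1" '
    'hostport="7555" guestport="5555"/>'
)


def adb_connect_command(xml_snippet):
    """Build the `adb connect` command from a single Forwarding element."""
    node = ET.fromstring(xml_snippet)
    if node.get("name") != "ADB_PORT":
        raise ValueError("not the ADB_PORT forwarding entry")
    # The host side of the forwarding rule is what adb has to connect to.
    return "adb connect {}:{}".format(node.get("hostip"), node.get("hostport"))


print(adb_connect_command(FORWARDING_XML))
```

If the emulator is configured with a different host port, the same helper would produce the matching command.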

Downloading images asynchronously with aiohttp

李魔佛 posted an article • 0 comments • 4458 views • 2019-09-16 17:14

import asyncio

import aiofiles
import aiohttp

url = 'http://xyhz.huizhou.gov.cn/static/js/common/jigsaw/images/{}.jpg'
headers = {'User-Agent': 'Mozilla/4.0 (compatible; MSIE 5.5; Windows NT)'}


async def getPage(num):
    async with aiohttp.ClientSession() as session:
        async with session.get(url.format(num), headers=headers) as resp:
            if resp.status == 200:
                # save the response body as <num>.jpg without blocking the event loop
                f = await aiofiles.open('{}.jpg'.format(num), mode='wb')
                await f.write(await resp.read())
                await f.close()


async def main():
    # gather accepts bare coroutines; asyncio.wait no longer does on Python 3.11+
    await asyncio.gather(*(getPage(i) for i in range(5)))


asyncio.run(main())

Original article; please credit the source when reposting:

http://30daydo.com/article/537
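When the number of images grows, it may be worth capping how many downloads run at once. The following is a minimal sketch of that idea using asyncio.Semaphore. It is not part of the original script: fake_download is a hypothetical stand-in that sleeps instead of calling aiohttp, so the sketch runs without network access.

```python
import asyncio


async def fake_download(num, limiter, active):
    """Stand-in for getPage: sleeps instead of doing network I/O."""
    async with limiter:                      # at most N coroutines get past here
        active["now"] += 1
        active["peak"] = max(active["peak"], active["now"])
        await asyncio.sleep(0.01)            # pretend to fetch and write the file
        active["now"] -= 1
    return "{}.jpg".format(num)


async def main(count=5, max_concurrent=2):
    limiter = asyncio.Semaphore(max_concurrent)  # created inside the running loop
    active = {"now": 0, "peak": 0}
    names = await asyncio.gather(
        *(fake_download(i, limiter, active) for i in range(count))
    )
    return names, active["peak"]


names, peak = asyncio.run(main())
print(names, peak)
```

asyncio.gather returns results in the order the coroutines were passed in, so names lines up with range(count) even though the downloads overlap.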

Scrapy source code analysis <1>: the entry point and how it runs

李魔佛 posted an article • 0 comments • 5517 views • 2019-08-31 10:47

Let's walk through Scrapy's execution flow from the source code.

When a scrapy command such as scrapy crawl is run, its Command class is invoked. The one below is a custom command that runs every spider in the project:

class Command(ScrapyCommand):
    requires_project = True

    def syntax(self):
        return '[options]'

    def short_desc(self):
        return 'Runs all of the spiders - My Defined'

    def run(self, args, opts):
        print('==================')
        print(type(self.crawler_process))
        spider_list = self.crawler_process.spiders.list()  # list the spider names
        for name in spider_list:
            print('=================')
            print(name)
            self.crawler_process.crawl(name, **opts.__dict__)
        self.crawler_process.start()

Next, crawler_process. It is an attribute of ScrapyCommand that holds a CrawlerProcess instance, and CrawlerProcess is in turn a subclass of CrawlerRunner.

The CrawlerRunner constructor mainly does the following:

def __init__(self, settings=None):
    if isinstance(settings, dict) or settings is None:
        settings = Settings(settings)
    self.settings = settings
    self.spider_loader = _get_spider_loader(settings)  # build the spider loader
    self._crawlers = set()
    self._active = set()
    self.bootstrap_failed = False

1. Load the settings

def _get_spider_loader(settings):
    cls_path = settings.get('SPIDER_LOADER_CLASS')
    # The project settings file does not define SPIDER_LOADER_CLASS, so this
    # value comes from the built-in default settings (code block A below):
    # SPIDER_LOADER_CLASS = 'scrapy.spiderloader.SpiderLoader'
    loader_cls = load_object(cls_path)
    # load_object turns a dotted path into the object it names, i.e. the string
    # 'scrapy.spiderloader.SpiderLoader' becomes the class itself
    # (see code block B below for load_object)
    return loader_cls.from_settings(settings.frozencopy())

The default settings file, default_settings.py:

# Code block A
# ...... several settings omitted
SCHEDULER = 'scrapy.core.scheduler.Scheduler'
SCHEDULER_DISK_QUEUE = 'scrapy.squeues.PickleLifoDiskQueue'
SCHEDULER_MEMORY_QUEUE = 'scrapy.squeues.LifoMemoryQueue'
SCHEDULER_PRIORITY_QUEUE = 'scrapy.pqueues.ScrapyPriorityQueue'
SPIDER_LOADER_CLASS = 'scrapy.spiderloader.SpiderLoader'  # this is the value
SPIDER_LOADER_WARN_ONLY = False
SPIDER_MIDDLEWARES = {}

The implementation of load_object:

# Code block B (exception handling removed for brevity)
from importlib import import_module  # standard library

def load_object(path):
    dot = path.rindex('.')
    module, name = path[:dot], path[dot+1:]
    # split the dotted path into module path + object name
    mod = import_module(module)
    obj = getattr(mod, name)
    # fetch that attribute from the module
    return obj

Test session:

In [33]: mod = import_module(module)

In [34]: mod

Out[34]: <module 'scrapy.spiderloader' from '/home/xda/anaconda3/lib/python3.7/site-packages/scrapy/spiderloader.py'>

In [35]: getattr(mod,name)

Out[35]: scrapy.spiderloader.SpiderLoader

In [36]: obj = getattr(mod,name)

In [37]: obj

Out[37]: scrapy.spiderloader.SpiderLoader

In [38]: type(obj)

Out[38]: type
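To try the same mechanism without Scrapy installed, here is a small sketch of load_object with error handling put back in. The error message format follows the NameError quoted in the frontera post on this page. Using collections.OrderedDict and the non-existent collections.FIFO as test paths is my own choice for illustration.

```python
from importlib import import_module


def load_object(path):
    """Resolve a dotted path such as 'package.module.Name' to the object itself."""
    try:
        dot = path.rindex('.')
    except ValueError:
        raise ValueError("Error loading object '%s': not a full path" % path)
    module, name = path[:dot], path[dot + 1:]
    mod = import_module(module)
    try:
        return getattr(mod, name)
    except AttributeError:
        raise NameError(
            "Module '%s' doesn't define any object named '%s'" % (module, name)
        )


cls = load_object('collections.OrderedDict')
print(cls)

# A path whose module exists but whose attribute does not.
message = None
try:
    load_object('collections.FIFO')
except NameError as exc:
    message = str(exc)
print(message)
```

The second call shows that a "doesn't define any object named ..." error means the module was imported fine and only the final attribute lookup failed.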

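In _get_spider_loader above, loader_cls is SpiderLoader, so what finally gets returned is SpiderLoader.from_settings(settings.frozencopy())

Next, look at SpiderLoader.from_settings:

@classmethod
def from_settings(cls, settings):
    return cls(settings)

It simply instantiates the class with the settings, so we can go straight to __init__.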
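As a quick illustration of this factory pattern, the sketch below uses a made-up Loader class (not Scrapy's SpiderLoader) and a plain dict in place of a Settings object. It only shows that calling cls(settings) inside a classmethod runs __init__ and returns an instance.

```python
class Loader:
    """Hypothetical stand-in for SpiderLoader, reduced to the factory pattern."""

    def __init__(self, settings):
        self.spider_modules = list(settings.get('SPIDER_MODULES', []))

    @classmethod
    def from_settings(cls, settings):
        # cls is the class itself; calling it runs __init__ and returns an instance
        return cls(settings)


loader = Loader.from_settings({'SPIDER_MODULES': ['myproject.spiders']})
print(type(loader).__name__, loader.spider_modules)
```

Because from_settings receives cls rather than a hard-coded class name, a subclass that overrides __init__ still gets built correctly through the same entry point.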

class SpiderLoader(object):
    """
    SpiderLoader is a class which locates and loads spiders
    in a Scrapy project.
    """
    def __init__(self, settings):
        self.spider_modules = settings.getlist('SPIDER_MODULES')
        # module names from the settings; generated for you when the Scrapy project is created
        # look in your own settings file to find this value; it is a list
        self.warn_only = settings.getbool('SPIDER_LOADER_WARN_ONLY')
        self._spiders = {}
        self._found = defaultdict(list)
        self._load_all_spiders()  # load all spiders

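The core is _load_all_spiders:

Here we go:

def _load_all_spiders(self):
    for name in self.spider_modules:
        for module in walk_modules(name):  # imports the package and each of its submodules
            # spiders found in each module are stored in the dict self._spiders
            self._load_spiders(module)  # collect the spider classes in this module
    self._check_name_duplicates()  # warn when several spiders share the same name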

Next, look at _load_spiders. Its core is the iter_spider_classes helper below.

def iter_spider_classes(module):
    from scrapy.spiders import Spider

    for obj in six.itervalues(vars(module)):  # iterate over everything defined in the module
        if inspect.isclass(obj) and \
           issubclass(obj, Spider) and \
           obj.__module__ == module.__name__ and \
           getattr(obj, 'name', None):  # a Spider subclass defined in this module, with a name attribute
            yield obj

This obj is the spider class we normally write.
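The filtering logic can be tried on its own. The sketch below re-creates the four checks with the standard library only. The Spider base class and the in-memory demo_spiders module are hypothetical stand-ins built just for this example, not Scrapy objects.

```python
import inspect
import types


class Spider:
    """Hypothetical base class standing in for scrapy.spiders.Spider."""
    name = None


def iter_spider_classes(module):
    """Yield classes defined in `module` that subclass Spider and set a name."""
    for obj in vars(module).values():
        if (inspect.isclass(obj)
                and issubclass(obj, Spider)
                and obj.__module__ == module.__name__
                and getattr(obj, 'name', None)):
            yield obj


# Build a throwaway module in memory so the sketch runs without a project.
demo = types.ModuleType('demo_spiders')
demo.NewsSpider = type(
    'NewsSpider', (Spider,), {'name': 'news', '__module__': 'demo_spiders'}
)
demo.Abstract = type('Abstract', (Spider,), {'__module__': 'demo_spiders'})
demo.Spider = Spider  # an imported base class: skipped, its __module__ differs

found = [cls.name for cls in iter_spider_classes(demo)]
print(found)
```

Abstract is skipped because it has no name, and the imported Spider base is skipped because it was defined in another module, which is why a base class imported into a spider file is not picked up as a spider.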

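So after all this analysis, we have finally reached the spider classes we write every day.

To be continued...

Original article; please credit the source when reposting:

http://30daydo.com/article/530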

Running frontera's link_follower.py fails with: doesn't define any object named 'FIFO'

李魔佛 posted an article • 0 comments • 3300 views • 2019-07-18 11:29

from __future__ import print_function

import re

import requests

from frontera.contrib.requests.manager import RequestsFrontierManager
# from frontera.contrib.requests.manager import RequestsFrontierManager
from frontera import Settings
from six.moves.urllib.parse import urljoin

SETTINGS = Settings()
SETTINGS.BACKEND = 'frontera.contrib.backends.memory.FIFO'
# SETTINGS.BACKEND = 'frontera.contrib.backends.memory.MemoryDistributedBackend'
SETTINGS.LOGGING_MANAGER_ENABLED = True
SETTINGS.LOGGING_BACKEND_ENABLED = True
SETTINGS.MAX_REQUESTS = 100
SETTINGS.MAX_NEXT_REQUESTS = 10

SEEDS = [
    'http://www.imdb.com',
]

LINK_RE = re.compile(r'<a.+?href="(.*?)".?>', re.I)


def extract_page_links(response):
    return [urljoin(response.url, link) for link in LINK_RE.findall(response.text)]


if __name__ == '__main__':
    frontier = RequestsFrontierManager(SETTINGS)
    frontier.add_seeds([requests.Request(url=url) for url in SEEDS])
    while True:
        next_requests = frontier.get_next_requests()
        if not next_requests:
            break
        for request in next_requests:
            try:
                response = requests.get(request.url)
                links = [
                    requests.Request(url=url)
                    for url in extract_page_links(response)
                ]
                frontier.page_crawled(response)
                print('Crawled', response.url, '(found', len(links), 'urls)')
                if links:
                    frontier.links_extracted(request, links)
            except requests.RequestException as e:
                error_code = type(e).__name__
                frontier.request_error(request, error_code)
                print('Failed to process request', request.url, 'Error:', e)

Whether it is run under Python 2 or Python 3, it raises the following error:

raise NameError("Module '%s' doesn't define any object named '%s'" % (module, name))

NameError: Module 'frontera.contrib.backends.memory' doesn't define any object named 'FIFO'

scrapy-rabbitmq does not support Python 3 [patching the source to add support]

李魔佛 posted an article • 0 comments • 3029 views • 2019-07-17 17:24

Running it under Python 3 raises an error.

The following places need to be changed:

To be continued...

Distributed crawling with Scrapy and RabbitMQ

李魔佛 posted an article • 0 comments • 5750 views • 2019-07-17 16:59

RabbitMQ is a decent message queue service, and it can serve as the message queue for Scrapy.

Here is a simple demo:

import re
import requests
import scrapy
from scrapy import Request
from rabbit_spider import settings
from scrapy.log import logger
import json
from rabbit_spider.items import RabbitSpiderItem
import datetime
from scrapy.selector import Selector
import pika

# from scrapy_rabbitmq.spiders import RabbitMQMixin
# from scrapy.contrib.spiders import CrawlSpider


class Website(scrapy.Spider):
    name = "rabbit"

    def start_requests(self):
        headers = {'Accept': '*/*',
                   'Accept-Encoding': 'gzip, deflate, br',
                   'Accept-Language': 'en-US,en;q=0.9,zh-CN;q=0.8,zh;q=0.7',
                   'Host': '36kr.com',
                   'Referer': 'https://36kr.com/information/web_news',
                   'User-Agent': 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.108 Safari/537.36'
                   }
        url = 'https://36kr.com/information/web_news'
        yield Request(url=url,
                      headers=headers)

    def parse(self, response):
        credentials = pika.PlainCredentials('admin', 'admin')
        connection = pika.BlockingConnection(pika.ConnectionParameters('192.168.1.101', 5672, '/', credentials))
        channel = connection.channel()
        channel.exchange_declare(exchange='direct_log', exchange_type='direct')
        result = channel.queue_declare(exclusive=True, queue='')
        queue_name = result.method.queue
        # print(queue_name)
        # infos = sys.argv[1:] if len(sys.argv)>1 else ['info']
        info = 'info'
        # bind the routing key (several keys can be bound)
        channel.queue_bind(
            exchange='direct_log',
            routing_key=info,
            queue=queue_name
        )
        print('start to receive [{}]'.format(info))
        channel.basic_consume(
            on_message_callback=self.callback_func,
            queue=queue_name,
            auto_ack=True,
        )
        channel.start_consuming()

    def callback_func(self, ch, method, properties, body):
        print(body)

Start the spider:

from scrapy import cmdline

cmdline.execute('scrapy crawl rabbit'.split())

Then push data into RabbitMQ:

import pika
import settings

credentials = pika.PlainCredentials('admin', 'admin')
connection = pika.BlockingConnection(pika.ConnectionParameters('192.168.1.101', 5672, '/', credentials))
channel = connection.channel()
channel.exchange_declare(exchange='direct_log', exchange_type='direct')  # 'fanout' would broadcast instead

routing_key = 'info'
message = 'https://36kr.com/pp/api/aggregation-entity?type=web_latest_article&b_id=59499&per_page=30'

channel.basic_publish(
    exchange='direct_log',
    routing_key=routing_key,
    body=message
)
print('sending message {}'.format(message))
connection.close()

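Once the message is pushed, Scrapy receives it from the queue right away.

Note that the queue-waiting call cannot go in start_requests: that method has to return an iterable of requests (usually a generator), otherwise the program raises an error.

To be continued...

###### Update 2019-08-29 ###################

Found a pitfall: after RabbitMQ delivers a message, yield cannot be used inside the callback function. A function that contains yield only returns a generator object when it is called, and nothing iterates over that object, so the callback body never runs.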
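The yield pitfall can be reproduced without RabbitMQ. In the sketch below, dispatch is a hypothetical stand-in for a consumer loop that calls the callback and ignores its return value. That is my reading of why yield has no effect here, not something taken from the pika source. The deque-based hand-off at the end is one possible workaround, shown only as an idea.

```python
from collections import deque


def dispatch(callback, body):
    """Stand-in for a consumer loop: calls the callback, ignores the return value."""
    callback(body)


seen = []


def plain_callback(body):
    seen.append(body)          # runs immediately


def yielding_callback(body):
    seen.append(body)          # never runs: calling this only builds a generator
    yield body


dispatch(plain_callback, 'a')
dispatch(yielding_callback, 'b')
print(seen)

# Possible workaround: the callback only stores the message,
# and a separate generator yields the stored messages later.
pending = deque()
dispatch(pending.append, 'c')


def drain():
    while pending:
        yield pending.popleft()


drained = list(drain())
print(drained)
```

Only 'a' ends up in seen, because the body of yielding_callback is never executed. The message handed to pending.append is still available afterwards for code that is allowed to yield.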

Scraping audio files from the Ximalaya app

李魔佛 posted an article • 0 comments • 5782 views • 2019-06-30 12:24

Ximalaya changed the format of its page source, so the crawler code has been updated as well.

# -*- coding: utf-8 -*-
# website: http://30daydo.com
# @Time : 2019/6/30 12:03
# @File : main.py

import requests
import re
import os

url = 'http://180.153.255.6/mobile/v1/album/track/ts-1571294887744?albumId=23057324&device=android&isAsc=true&isQueryInvitationBrand=true&pageId={}&pageSize=20&pre_page=0'
headers = {'User-Agent': 'Xiaomi'}


def download():
    for i in range(1, 3):
        r = requests.get(url=url.format(i), headers=headers)
        js_data = r.json()
        data_list = js_data.get('data', {}).get('list', [])
        for item in data_list:
            trackName = item.get('title')
            # replace characters that are not allowed in file names
            trackName = re.sub(r'[\\/:*?"<>|]', '_', trackName)
            # trackName=re.sub(':','',trackName)
            src_url = item.get('playUrl64')
            filename = '{}.mp3'.format(trackName)
            if not os.path.exists(filename):
                try:
                    r0 = requests.get(src_url, headers=headers)
                except Exception as e:
                    print(e)
                    print(trackName)
                    r0 = requests.get(src_url, headers=headers)
                else:
                    with open(filename, 'wb') as f:
                        f.write(r0.content)
                    print('{} downloaded'.format(trackName))
            else:
                print(f'{filename} has already been downloaded')

import shutil


def rename_():
    for i in range(1, 3):
        r = requests.get(url=url.format(i), headers=headers)
        js_data = r.json()
        data_list = js_data.get('data', {}).get('list', [])
        for item in data_list:
            trackName = item.get('title')
            trackName = re.sub(r'[\\/:*?"<>|]', '_', trackName)
            src_url = item.get('playUrl64')
            orderNo = item.get('orderNo')
            filename = '{}.mp3'.format(trackName)
            try:
                if os.path.exists(filename):
                    # prefix the file name with the track's order number
                    new_file = '{}_{}.mp3'.format(orderNo, trackName)
                    shutil.move(filename, new_file)
            except Exception as e:
                print(e)


if __name__ == '__main__':
    rename_()
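The file-name clean-up step used by both functions above can be checked in isolation. This is a small sketch of it: safe_filename and INVALID_CHARS are names invented for the example, and the pattern is written as a raw string to avoid invalid-escape warnings.

```python
import re

# Characters that Windows does not allow in file names: \ / : * ? " < > |
INVALID_CHARS = re.compile(r'[\\/:*?"<>|]')


def safe_filename(title, ext='.mp3'):
    """Replace each forbidden character with '_' and append the extension."""
    return INVALID_CHARS.sub('_', title) + ext


cleaned = safe_filename('Q&A: what/why?')
print(cleaned)
```

Inside a character class most of these characters need no backslash at all; only the backslash itself has to be escaped.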

The audio files have been updated too; see the Baidu Netdisk link for details.

======== 2018-10=============

Scraping the audio files of 杨继东的投资之道 from the Ximalaya app

Environment: Python 3

# -*- coding: utf-8 -*-
# website: http://30daydo.com
# @Time : 2019/6/30 12:03
# @File : main.py

import requests
import re

url = 'https://www.ximalaya.com/revision/play/album?albumId=23057324&pageNum=1&sort=1&pageSize=60'
headers = {'User-Agent': 'Xiaomi'}

r = requests.get(url=url, headers=headers)
js_data = r.json()
data_list = js_data.get('data', {}).get('tracksAudioPlay', [])

for item in data_list:
    trackName = item.get('trackName')
    trackName = re.sub(':', '', trackName)  # drop colons, which are not valid in file names
    src_url = item.get('src')
    try:
        r0 = requests.get(src_url, headers=headers)
    except Exception as e:
        print(e)
        print(trackName)
    else:
        with open('{}.m4a'.format(trackName), 'wb') as f:
            f.write(r0.content)
        print('{} downloaded'.format(trackName))

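Save it as main.py

Then run python main.py

After a few minutes everything will have been downloaded automatically.

The downloaded audio files are attached:

Link: https://pan.baidu.com/s/1t_vJhTvSJSeFdI1IaDS6fA

Extraction code: e3zb

Original article; please credit the source when reposting:

http://30daydo.com/article/503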

Posting an image file directly with requests

李魔佛 posted an article • 0 comments • 3407 views • 2019-05-17 16:32

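import requests

file_path = r'9927_15562445086485238.png'

# code_url is the endpoint that receives the image; it is not defined in this snippet
with open(file_path, 'rb') as f:
    file = f.read()

r = requests.post(url=code_url, data=file)  # the raw bytes become the request body
print(r.text)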

Replacing line breaks in Chinese text with a regular expression [Python]

李魔佛 posted an article • 0 comments • 2850 views • 2019-05-13 11:02

Use a regular expression to replace line breaks (the replacement can be any string).

js = re.sub('\r\n', '', js)

Done.
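The one-liner above only matches Windows-style \r\n. If the text may also contain a bare \n or \r, a slightly broader pattern covers all three. This is a sketch, and strip_line_breaks is a name made up for the example.

```python
import re


def strip_line_breaks(text, replacement=''):
    """Replace Windows (CR LF), old Mac (CR) and Unix (LF) line breaks."""
    # \r\n is listed first so a Windows line break is replaced once, not twice
    return re.sub(r'\r\n|\r|\n', replacement, text)


joined = strip_line_breaks('第一行\r\n第二行\n第三行')
spaced = strip_line_breaks('a\r\nb', ' ')
print(joined)
print(spaced)
```

Passing a replacement such as a space keeps words from running together when the line breaks separated them.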

Request headers show "Provisional headers are shown"

李魔佛 posted an article • 0 comments • 4839 views • 2019-05-13 10:07

Uninstalling the browser plugin fixed the problem.

Submitting POST data with aiohttp in an async crawler

李魔佛 posted an article • 0 comments • 7716 views • 2019-05-08 16:40

async def fetch(session, url, data):
    async with session.post(url=url, data=data, headers=headers) as response:
        return await response.json()

The complete example:

import aiohttp
import asyncio

page = 30

post_data = {
    'page': 1,
    'pageSize': 10,
    'keyWord': '',
    'dpIds': '',
}

headers = {
    "Accept-Encoding": "gzip, deflate",
    "Accept-Language": "en-US,en;q=0.9",
    "User-Agent": "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.108 Safari/537.36",
    "X-Requested-With": "XMLHttpRequest",
}

result = []  # rows collected from every page


async def fetch(session, url, data):
    async with session.post(url=url, data=data, headers=headers) as response:
        return await response.json()


async def parse(html):
    xzcf_list = html.get('newtxzcfList')
    if xzcf_list is None:
        return
    for i in xzcf_list:
        result.append(i)


async def downlod(page):
    data = post_data.copy()
    data['page'] = page  # each task posts its own page number
    url = 'http://credit.chaozhou.gov.cn/tfieldTypeActionJson!initXzcfListnew.do'
    async with aiohttp.ClientSession() as session:
        html = await fetch(session, url, data)
        await parse(html)


async def main():
    await asyncio.gather(*(downlod(i) for i in range(1, page)))


asyncio.run(main())

# print(result)
count = 0
for i in result:
    print(i.get('cfXdrMc'))
    count += 1
print(f'total {count}')
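Instead of appending to a module-level list, the pages can also be collected from the return value of asyncio.gather, which keeps the results in request order. Below is a minimal sketch of that variant. fetch_page is a hypothetical stand-in that fakes the JSON payload with the same newtxzcfList and cfXdrMc keys, so it runs without network access.

```python
import asyncio


async def fetch_page(page):
    """Hypothetical stand-in for the POST request: returns one fake page of rows."""
    await asyncio.sleep(0.01 * (5 - page))   # later pages finish first
    return {
        'newtxzcfList': [
            {'cfXdrMc': 'page{}-row{}'.format(page, n)} for n in range(2)
        ]
    }


async def main(pages):
    responses = await asyncio.gather(*(fetch_page(p) for p in pages))
    rows = []
    for payload in responses:                # same order as `pages`
        rows.extend(payload.get('newtxzcfList') or [])
    return rows


rows = asyncio.run(main(range(1, 4)))
print([row['cfXdrMc'] for row in rows])
```

Even though page 3 finishes before page 1 here, the rows come back ordered by page, which a shared list filled from inside the tasks does not guarantee.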

Async crawling with Python aiohttp: scraping Lianjia listings asynchronously

李魔佛 posted an article • 0 comments • 2720 views • 2019-05-08 15:52

import requests

from lxml import etree

import asyncio

import aiohttp

import pandas

import re

import math

import time

loction_info = ''' 1→Hangzhou

2→Wuhan

3→Beijing

Press ENTER to confirm:'''

loction_select = input(loction_info)

loction_dic = {'1': 'hz',

'2': 'wh',

'3': 'bj'}

city_url = 'https://{}.lianjia.com/ershoufang/'.format(loction_dic[loction_select])

down = input('Enter the minimum price (in 10,000 CNY): ')

up = input('Enter the maximum price (in 10,000 CNY): ')

inter_list = [(int(down), int(up))]

def half_inter(inter):

lower = inter[0]

upper = inter[1]

delta = int((upper - lower) / 2)

inter_list.remove(inter)

print('Split price range', inter)

inter_list.append((lower, lower + delta))

inter_list.append((lower + delta, upper))

pagenum = {}

def get_num(inter):

url = city_url + 'bp{}ep{}/'.format(inter[0], inter[1])

r = requests.get(url).text

print(r)

num = int(etree.HTML(r).xpath("//h2[@class='total fl']/span/text()")[0].strip())

pagenum[(inter[0], inter[1])] = num

return num

totalnum = get_num(inter_list[0])

judge = True

while judge:

a = [get_num(x) > 3000 for x in inter_list]

if True in a:

judge = True

else:

judge = False

for i in inter_list:

if get_num(i) > 3000:

half_inter(i)

print('Price ranges fully split!')

url_lst = []

url_lst_failed = []

url_lst_successed = []

url_lst_duplicated = []

for i in inter_list:

totalpage = math.ceil(pagenum[i] / 30)

for j in range(1, totalpage + 1):

url = city_url + 'pg{}bp{}ep{}/'.format(j, i[0], i[1])

url_lst.append(url)

print('URL list built!')

info_lst = []

async def get_info(url):

async with aiohttp.ClientSession() as session:

async with session.get(url, timeout=5) as resp:

if resp.status != 200:

url_lst_failed.append(url)

else:

url_lst_successed.append(url)

r = await resp.text()

nodelist = etree.HTML(r).xpath("//ul[@class='sellListContent']/li")

# print('-------------------------------------------------------------')

# print('Start scraping page {} of {}'.format(url_lst.index(url), len(url_lst)))

# print('-------------------------------------------------------------')

info_dic = {}

index = 1

print('Start scraping {}'.format(resp.url))

print('Start scraping {}'.format(resp.url))

print('Start scraping {}'.format(resp.url))

for node in nodelist:

try:

info_dic['title'] = node.xpath(".//div[@class='title']/a/text()")[0]

except:

info_dic['title'] = '/'

try:

info_dic['href'] = node.xpath(".//div[@class='title']/a/@href")[0]

except:

info_dic['href'] = '/'

try:

info_dic['xiaoqu'] = \

node.xpath(".//div[@class='houseInfo']")[0].xpath('string(.)').replace(' ', '').split('|')[0]

except:

info_dic['xiaoqu'] = '/'

try:

info_dic['huxing'] = \

node.xpath(".//div[@class='houseInfo']")[0].xpath('string(.)').replace(' ', '').split('|')[1]

except:

info_dic['huxing'] = '/'

try:

info_dic['area'] = \

node.xpath(".//div[@class='houseInfo']")[0].xpath('string(.)').replace(' ', '').split('|')[2]

except:

info_dic['area'] = '/'

try:

info_dic['chaoxiang'] = \

node.xpath(".//div[@class='houseInfo']")[0].xpath('string(.)').replace(' ', '').split('|')[3]

except:

info_dic['chaoxiang'] = '/'

try:

info_dic['zhuangxiu'] = \

node.xpath(".//div[@class='houseInfo']")[0].xpath('string(.)').replace(' ', '').split('|')[4]

except:

info_dic['zhuangxiu'] = '/'

try:

info_dic['dianti'] = \

node.xpath(".//div[@class='houseInfo']")[0].xpath('string(.)').replace(' ', '').split('|')[5]

except:

info_dic['dianti'] = '/'

try:

info_dic['louceng'] = re.findall('\((.*)\)', node.xpath(".//div[@class='positionInfo']/text()")[0])

except:

info_dic['louceng'] = '/'

try:

info_dic['nianxian'] = re.findall('\)(.*?)年', node.xpath(".//div[@class='positionInfo']/text()")[0])

except:

info_dic['nianxian'] = '/'

try:

info_dic['guanzhu'] = ''.join(re.findall('[0-9]', node.xpath(".//div[@class='followInfo']/text()")[

0].replace(' ', '').split('/')[0]))

except:

info_dic['guanzhu'] = '/'

try:

info_dic['daikan'] = ''.join(re.findall('[0-9]',

node.xpath(".//div[@class='followInfo']/text()")[0].replace(

' ', '').split('/')[1]))

except:

info_dic['daikan'] = '/'

try:

info_dic['fabu'] = node.xpath(".//div[@class='followInfo']/text()")[0].replace(' ', '').split('/')[

2]

except:

info_dic['fabu'] = '/'

try:

info_dic['totalprice'] = node.xpath(".//div[@class='totalPrice']/span/text()")[0]

except:

info_dic['totalprice'] = '/'

try:

info_dic['unitprice'] = node.xpath(".//div[@class='unitPrice']/span/text()")[0].replace('单价', '')

except:

info_dic['unitprice'] = '/'

if True in [info_dic['href'] in dic.values() for dic in info_lst]:

url_lst_duplicated.append(info_dic)

else:

info_lst.append(info_dic)

print('第{}条: {}→房屋信息抓取完毕!'.format(index, info_dic['title']))

index += 1

info_dic = {}

start = time.time()

# 首次抓取url_lst中的信息,部分url没有对其发起请求,不知道为什么

tasks = [asyncio.ensure_future(get_info(url)) for url in url_lst]

loop = asyncio.get_event_loop()

loop.run_until_complete(asyncio.wait(tasks))

# 将没有发起请求的url放入一个列表,对其进行循环抓取,直到所有url都被发起请求

url_lst_unrequested = []

for url in url_lst:

if url not in url_lst_successed or url_lst_failed:

url_lst_unrequested.append(url)

while len(url_lst_unrequested) > 0:

tasks_unrequested = [asyncio.ensure_future(get_info(url)) for url in url_lst_unrequested]

loop.run_until_complete(asyncio.wait(tasks_unrequested))

url_lst_unrequested = []

for url in url_lst:

if url not in url_lst_successed:

url_lst_unrequested.append(url)

end = time.time()

print('当前价格区间段内共有{}套二手房源\(包含{}条重复房源\),实际获得{}条房源信息。'.format(totalnum, len(url_lst_duplicated), len(info_lst)))

print('总共耗时{}秒'.format(end - start))

df = pandas.DataFrame(info_lst)

df.to_csv("ljwh.csv", encoding='gbk') 查看全部

import asyncio
import math
import re
import time

import aiohttp
import pandas
import requests
from lxml import etree

# Pick a city; the key typed by the user selects the Lianjia subdomain.
loction_info = ''' 1→Hangzhou
 2→Wuhan
 3→Beijing
Press ENTER to confirm:'''
loction_select = input(loction_info)
loction_dic = {'1': 'hz',
               '2': 'wh',
               '3': 'bj'}
city_url = 'https://{}.lianjia.com/ershoufang/'.format(loction_dic[loction_select])

down = input('Enter the lower price bound (in 10,000 CNY): ')
up = input('Enter the upper price bound (in 10,000 CNY): ')
inter_list = [(int(down), int(up))]


def half_inter(inter):
    """Replace one price interval in inter_list with its two halves."""
    lower = inter[0]
    upper = inter[1]
    delta = int((upper - lower) / 2)
    inter_list.remove(inter)
    print('Narrowed price interval', inter)
    inter_list.append((lower, lower + delta))
    inter_list.append((lower + delta, upper))


pagenum = {}


def get_num(inter):
    """Return the number of listings in a price interval and cache it in pagenum."""
    url = city_url + 'bp{}ep{}/'.format(inter[0], inter[1])
    r = requests.get(url).text
    num = int(etree.HTML(r).xpath("//h2[@class='total fl']/span/text()")[0].strip())
    pagenum[(inter[0], inter[1])] = num
    return num


totalnum = get_num(inter_list[0])

# Keep halving until every interval holds at most 3000 listings
# (at 30 listings per page that is 100 pages).
# Work on a snapshot, because half_inter() mutates inter_list, and stop
# splitting intervals that are already one unit wide.
while True:
    too_big = [x for x in inter_list if get_num(x) > 3000 and x[1] - x[0] > 1]
    if not too_big:
        break
    for i in too_big:
        half_inter(i)
print('Price intervals narrowed!')

url_lst = []
url_lst_failed = []
url_lst_successed = []
url_lst_duplicated = []
for i in inter_list:
    totalpage = math.ceil(pagenum[i] / 30)
    for j in range(1, totalpage + 1):
        url = city_url + 'pg{}bp{}ep{}/'.format(j, i[0], i[1])
        url_lst.append(url)
print('URL list built!')

info_lst = []

def pick(getter):
    """Return getter(), or '/' when the field is missing from the listing."""
    try:
        return getter()
    except Exception:
        return '/'


async def get_info(url):
    timeout = aiohttp.ClientTimeout(total=5)
    async with aiohttp.ClientSession(timeout=timeout) as session:
        async with session.get(url) as resp:
            if resp.status != 200:
                url_lst_failed.append(url)
            else:
                url_lst_successed.append(url)
            r = await resp.text()
            nodelist = etree.HTML(r).xpath("//ul[@class='sellListContent']/li")
            print('Scraping {}'.format(resp.url))
            for index, node in enumerate(nodelist, 1):
                # The three text blocks of one listing, read lazily so that
                # pick() can catch a missing block or a missing field.
                def house(i):
                    text = node.xpath(".//div[@class='houseInfo']")[0].xpath('string(.)')
                    return text.replace(' ', '').split('|')[i]

                def position():
                    return node.xpath(".//div[@class='positionInfo']/text()")[0]

                def follow(i):
                    text = node.xpath(".//div[@class='followInfo']/text()")[0]
                    return text.replace(' ', '').split('/')[i]

                info_dic = {
                    'title': pick(lambda: node.xpath(".//div[@class='title']/a/text()")[0]),
                    'href': pick(lambda: node.xpath(".//div[@class='title']/a/@href")[0]),
                    'xiaoqu': pick(lambda: house(0)),     # residential community
                    'huxing': pick(lambda: house(1)),     # layout
                    'area': pick(lambda: house(2)),
                    'chaoxiang': pick(lambda: house(3)),  # orientation
                    'zhuangxiu': pick(lambda: house(4)),  # decoration
                    'dianti': pick(lambda: house(5)),     # elevator
                    'louceng': pick(lambda: re.findall(r'\((.*)\)', position())),   # floor
                    'nianxian': pick(lambda: re.findall(r'\)(.*?)年', position())),  # year built
                    'guanzhu': pick(lambda: ''.join(re.findall('[0-9]', follow(0)))),  # followers
                    'daikan': pick(lambda: ''.join(re.findall('[0-9]', follow(1)))),   # viewings
                    'fabu': pick(lambda: follow(2)),      # time since listing
                    'totalprice': pick(lambda: node.xpath(".//div[@class='totalPrice']/span/text()")[0]),
                    'unitprice': pick(
                        lambda: node.xpath(".//div[@class='unitPrice']/span/text()")[0].replace('单价', '')),
                }
                # A listing whose href was already collected counts as a duplicate.
                if any(info_dic['href'] in dic.values() for dic in info_lst):
                    url_lst_duplicated.append(info_dic)
                else:
                    info_lst.append(info_dic)
                print('Listing {}: {} → scraped!'.format(index, info_dic['title']))

start = time.time()


async def crawl(urls):
    """Run get_info for every URL concurrently; exceptions are collected, not raised."""
    await asyncio.gather(*(get_info(u) for u in urls), return_exceptions=True)


# First pass over url_lst. For some URLs the request never goes through;
# I do not know why (possibly timeouts or other exceptions inside the task).
asyncio.run(crawl(url_lst))

# Collect the URLs that have not succeeded yet and retry them until all have.
url_lst_unrequested = [url for url in url_lst if url not in url_lst_successed]
while len(url_lst_unrequested) > 0:
    asyncio.run(crawl(url_lst_unrequested))
    url_lst_unrequested = [url for url in url_lst if url not in url_lst_successed]

end = time.time()
print('The price range contains {} second-hand listings (including {} duplicates); {} listings were actually collected.'.format(
    totalnum, len(url_lst_duplicated), len(info_lst)))
print('Total time: {} seconds'.format(end - start))

df = pandas.DataFrame(info_lst)
df.to_csv("ljwh.csv", encoding='gbk')
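The interval-halving step of the script can be tried without touching the network. The following is only a sketch under assumptions: `split_intervals` and `fake_count` are hypothetical names, and `count` stands in for `get_num`, which in the real script asks Lianjia how many listings fall in a price range.

```python
def split_intervals(lower, upper, count, limit=3000):
    """Split [lower, upper] until every piece holds at most `limit` items.

    `count(lo, hi)` is a stand-in for get_num(): any callable that returns
    how many listings fall in the price range.
    """
    pending = [(lower, upper)]
    done = []
    while pending:
        lo, hi = pending.pop()
        # Stop splitting once the piece is small enough or one unit wide.
        if count(lo, hi) > limit and hi - lo > 1:
            mid = lo + (hi - lo) // 2
            pending.append((lo, mid))
            pending.append((mid, hi))
        else:
            done.append((lo, hi))
    return sorted(done)


# Toy counter: pretend there are 10 listings per price unit.
def fake_count(lo, hi):
    return (hi - lo) * 10


print(split_intervals(0, 1000, fake_count))
```

With the toy counter, the range 0 to 1000 ends up in four pieces of 2500 listings each. Note that neighbouring pieces share a boundary price, which is one reason the script has to filter duplicate listings afterwards.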

Debugging Scrapy in PyCharm fails with twisted.internet.error.ReactorNotRestartable

李魔佛 posted an article • 0 comments • 6003 views • 2019-04-23 11:35

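It took me a while to find the cause:

the script that launches Scrapy must not be named cmd.py!

I had put the Scrapy startup code in cmd.py:

from scrapy import cmdline
cmdline.execute('scrapy crawl xxxx'.split())

The file cmd.py then has the same name as Python's standard-library cmd module, which debugging tools such as pdb import, so the debugger picks up the wrong module.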
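A quick way to catch this kind of name clash before it bites is to compare the script name against the standard library's module names. This is a minimal sketch, not part of the original post; `shadows_stdlib` is a hypothetical helper, and it relies on `sys.stdlib_module_names`, which exists on Python 3.10 and later.

```python
import sys
from pathlib import Path


def shadows_stdlib(script_path):
    """Return True if the script's name matches a standard-library module."""
    name = Path(script_path).stem
    # sys.stdlib_module_names exists on Python 3.10+; on older interpreters
    # fall back to a tiny hand-picked set (illustrative, not complete).
    stdlib = getattr(sys, 'stdlib_module_names', {'cmd', 'pdb', 'code', 'logging'})
    return name in stdlib


print(shadows_stdlib('cmd.py'))         # True
print(shadows_stdlib('run_spider.py'))  # False
```

Renaming the launcher to something like run_spider.py avoids the clash.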

CentOS: Zookeeper will not start: Error contacting service,It is probably not running

李魔佛 posted an article • 0 comments • 4427 views • 2019-04-09 19:20

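Running

./kafka-server-start.sh -daemon ../config/server.properties

failed with:

Error contacting service,It is probably not running

Restarting, killing the processes, and checking whether the port was already in use did not help.

Then I looked at the firewall and, OMG, one of the machines still had its firewall running.

After stopping it by hand, the problem was solved.

Commands to turn off the firewall:

systemctl stop firewalld.service # stop the firewall

systemctl disable firewalld.service # do not start the firewall at boot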
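Before switching the firewall off everywhere, it can help to check from another machine whether the service port is reachable at all. The sketch below is an assumption-laden illustration: `port_open` is a hypothetical helper, the host names in the comment are placeholders, and 2181 is ZooKeeper's default client port (yours may differ).

```python
import socket


def port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, filtered by a firewall, timed out, or host not resolvable.
        return False


# Usage from another node of the cluster (placeholder host names):
#   for host in ['node1', 'node2', 'node3']:
#       print(host, port_open(host, 2181))
```

A node that answers locally but returns False from the other machines is a hint that a firewall sits in between.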

[python] How to use pymongo's find_one_and_update

李魔佛 posted an article • 0 comments • 13722 views • 2019-04-04 11:31

db.collection.findOneAndUpdate(
    <filter>,
    <update>,
    {
        projection: <document>,
        sort: <document>,
        maxTimeMS: <number>,
        upsert: <boolean>,
        returnNewDocument: <boolean>,
        collation: <document>,
        arrayFilters: [ <filterdocument1>, ... ]
    }
)

In Python with pymongo this becomes:

>>> db.example.find_one_and_update(
...     {'_id': 'userid'},
...     {'$inc': {'seq': 1}},
...     projection={'seq': True, '_id': False},
...     return_document=ReturnDocument.AFTER)

The statement above means:

find the document whose _id is 'userid', add 1 to its seq field, and return only seq, without the _id field.

The returned data looks like this:

{'seq': 2}

Note

findOneAndUpdate fetches the first document in the collection that matches the filter and modifies it. The function is thread-safe: when several threads run it at once, no two of them will fetch and modify the same document at the same time.

In other words, the operation is atomic.

Where ReturnDocument comes from:

class pymongo.collection.ReturnDocument

Put this at the top of the file: from pymongo.collection import ReturnDocument
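A common use of this atomic update is a shared counter. The following is a minimal sketch rather than code from the post: `next_seq` is a hypothetical helper, `upsert=True` is my addition so the counter is created on first use, and the function takes `ReturnDocument.AFTER` as a parameter so the block itself runs without a MongoDB server.

```python
def next_seq(collection, key, return_after):
    """Atomically increment and return the counter stored under `key`.

    `collection` is expected to behave like a pymongo Collection and
    `return_after` should be pymongo's ReturnDocument.AFTER.
    """
    doc = collection.find_one_and_update(
        {'_id': key},
        {'$inc': {'seq': 1}},
        projection={'seq': True, '_id': False},
        upsert=True,  # assumption: create the counter if it does not exist yet
        return_document=return_after,
    )
    return doc['seq']


# Usage against a real server (not run here; database name is a placeholder):
#   from pymongo import MongoClient
#   from pymongo.collection import ReturnDocument
#   coll = MongoClient()['test']['example']
#   print(next_seq(coll, 'userid', ReturnDocument.AFTER))
```

Because the increment and the read happen in one server-side operation, two workers calling `next_seq` at the same time should never receive the same number.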

Original article

Please credit the source when reposting:

http://30daydo.com/article/445

Passing multiple arguments to a Scrapy spider from the command line (dynamic arguments)

李魔佛 posted an article • 0 comments • 6911 views • 2019-03-28 11:24

To do this, define a constructor in the spider:

def __init__(self, page=None, *args, **kwargs):
    super(Gaode, self).__init__(*args, **kwargs)
    self.page = page

def start_requests(self):
    # XXXXXX: just use self.page here
    yield Request(XXXX)

Then give the argument a value when launching Scrapy:

scrapy crawl spider -a page=10

and the argument is passed in dynamically.
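For more than one argument, the `-a` option is simply repeated, and each `name=value` pair reaches the constructor as a keyword argument holding a string. The sketch below uses a plain-Python stand-in class rather than a real `scrapy.Spider`, so it runs without Scrapy installed; the class name `GaodeArgs`, the `city` argument and the default values are all hypothetical.

```python
class GaodeArgs:
    """Plain-Python stand-in for the spider's constructor (not a scrapy.Spider)."""

    def __init__(self, page=None, city=None, *args, **kwargs):
        # Values given with -a arrive as strings, so convert explicitly.
        self.page = int(page) if page is not None else 1
        self.city = city or 'beijing'


# Equivalent of: scrapy crawl spider -a page=10 -a city=shenzhen
spider = GaodeArgs(page='10', city='shenzhen')
print(spider.page + 1, spider.city)
```

Converting in the constructor keeps the rest of the spider free of `int(self.page)` calls.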

Original article

Please credit the source when reposting: http://30daydo.com/article/436

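Garbled Chinese in scrapyd log files: a workaround

李魔佛 posted an article • 0 comments • 4279 views • 2019-03-27 17:13

The fix usually suggested online is to modify the scrapyd source and add a UTF-8 page, which means rewriting an HTML page template. For someone who only wants to read the logs, that is overkill.

You can simply open the log file with Postman; the Chinese text displays correctly there.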
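The same thing can be done from Python by downloading the log and decoding it explicitly. This is a sketch under two assumptions: that the log file itself is UTF-8 (so the garbling comes from the browser guessing the wrong charset), and that scrapyd serves logs under its usual `/logs/<project>/<spider>/<job>.log` path on port 6800. `decode_log` and `fetch_log` are hypothetical helper names, and the URL in the comment is a placeholder.

```python
from urllib.request import urlopen


def decode_log(raw):
    """Decode raw log bytes as UTF-8, replacing any undecodable bytes."""
    return raw.decode('utf-8', errors='replace')


def fetch_log(url):
    """Download a scrapyd log file and return its text."""
    with urlopen(url, timeout=10) as resp:
        return decode_log(resp.read())


# Placeholder job URL; fill in your own project, spider and job id:
#   print(fetch_log('http://localhost:6800/logs/myproject/myspider/<job_id>.log'))
print(decode_log('抓取完成'.encode('utf-8')))
```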

A homemade Youdao dictionary on Linux: cracking Youdao's JS encryption with Python

李魔佛 posted an article • 0 comments • 4433 views • 2019-02-23 20:17

I usually develop on Linux, where there is no good translation software, and keeping the NetEase page open also eats system resources, so I wrote a translation tool for the console.

With a Python crawler in mind, read the page's JS encryption code and analyze it step by step, and you arrive at the final signing method.

Here is the code:

# -*- coding: utf-8 -*-
# website: http://30daydo.com
# @Time : 2019/2/23 19:34
# @File : youdao.py
# Crack the JS signing of the Youdao dictionary
import hashlib
import random
import time

import requests


def md5_(word):
    """Return the hex MD5 digest of a string."""
    s = bytes(word, encoding='utf8')
    m = hashlib.md5()
    m.update(s)
    ret = m.hexdigest()
    return ret


def get_sign(word, salt):
    """Build the request signature: md5(client + word + salt + secret)."""
    ret = md5_('fanyideskweb' + word + salt + 'p09@Bn{h02_BIEe]$P^nG')
    return ret


def youdao(word):
    url = 'http://fanyi.youdao.com/translate_o?smartresult=dict&smartresult=rule'
    # Content-Length is left out on purpose: requests computes it from the body.
    headers = {
        'Host': 'fanyi.youdao.com',
        'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:47.0) Gecko/20100101 Firefox/47.0',
        'Accept': 'application/json, text/javascript, */*; q=0.01',
        'Accept-Language': 'zh-CN,zh;q=0.8,en-US;q=0.5,en;q=0.3',
        'Accept-Encoding': 'gzip, deflate',
        'Content-Type': 'application/x-www-form-urlencoded; charset=UTF-8',
        'X-Requested-With': 'XMLHttpRequest',
        'Referer': 'http://fanyi.youdao.com/',
        'Cookie': 'YOUDAO_MOBILE_ACCESS_TYPE=1; OUTFOX_SEARCH_USER_ID=1672542763@10.169.0.83; JSESSIONID=aaaWzxpjeDu1gbhopLzKw; ___rl__test__cookies=1550913722828; OUTFOX_SEARCH_USER_ID_NCOO=372126049.6326876',
        'Connection': 'keep-alive',
        'Pragma': 'no-cache',
        'Cache-Control': 'no-cache',
    }
    ts = str(int(time.time() * 1000))         # millisecond timestamp
    salt = ts + str(random.randint(0, 10))    # timestamp plus a random suffix
    bv = md5_("5.0 (Windows)")
    sign = get_sign(word, salt)
    post_data = {
        'i': word,
        'from': 'AUTO', 'to': 'AUTO', 'smartresult': 'dict', 'client': 'fanyideskweb', 'salt': salt,
        'sign': sign, 'ts': ts, 'bv': bv, 'doctype': 'json', 'version': '2.1',
        'keyfrom': 'fanyi.web', 'action': 'FY_BY_REALTIME', 'typoResult': 'false'
    }
    r = requests.post(
        url=url,
        headers=headers,
        data=post_data
    )
    # entries may be absent, so fall back to an empty list
    for item in r.json().get('smartResult', {}).get('entries', []):
        print(item)


word = 'student'
youdao(word)

Running it prints the dictionary entries returned for the word.

Github:

https://github.com/Rockyzsu/CrawlMan/tree/master/youdao_dictionary

Original article; please credit the source when reposting

http://30daydo.com/article/416

Saving a Scrapy response as an image

李魔佛 posted an article • 0 comments • 3857 views • 2019-02-01 14:39

with open('temp.jpg', 'wb') as f:
    f.write(response.body)

That is all it takes.

Lagou's anti-scraping measures

李魔佛 posted an article • 0 comments • 3561 views • 2019-01-23 10:18

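(Mind the date: there is no guarantee that these anti-scraping measures will still apply later on.)

1. IP bans. Nothing to discuss here; you have to use proxy IPs.

2. In Scrapy you need to send headers, and the headers must include the Cookie data. Passing cookies through the Request's cookies parameter used to work, but I found that it no longer does; the cookies now have to be put into the headers.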
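If the cookies are held in a dict, they can be joined into a single Cookie header value before the request is built. A minimal sketch, with a hypothetical helper name `cookie_header` and placeholder cookie values (real values have to come from a browser session):

```python
def cookie_header(cookies):
    """Join a dict of cookies into the value of a Cookie request header."""
    return '; '.join('{}={}'.format(k, v) for k, v in cookies.items())


# Placeholder cookie values, for illustration only.
cookies = {'JSESSIONID': 'abc123', 'index_location_city': '%E6%B7%B1%E5%9C%B3'}
headers = {
    'User-Agent': 'Mozilla/5.0',
    'Cookie': cookie_header(cookies),
}
print(headers['Cookie'])
```

The resulting `headers` dict would then be passed as the `headers` argument of the Scrapy Request.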

headers = {
    'Accept': 'application/json,text/javascript,*/*;q=0.01',
    'Accept-Encoding': 'gzip,deflate,br',
    'Accept-Language': 'zh-CN,zh;q=0.9,en;q=0.8',
    'Cache-Control': 'no-cache',
    # 'Connection': 'keep-alive',
    'Content-Type': 'application/x-www-form-urlencoded;charset=UTF-8',
    'Cookie': 'JSESSIONID=ABAAABAABEEAAJAACF8F22F99AFA35F9EEC28F2D0E46A41;_ga=GA1.2.331323650.1548204973;_gat=1;Hm_lvt_4233e74dff0ae5bd0a3d81c6ccf756e6=1548204973;user_trace_token=20190123085612-adf35b62-1ea9-11e9-b744-5254005c3644;LGSID=20190123085612-adf35c69-1ea9-11e9-b744-5254005c3644;PRE_UTM=;PRE_HOST=;PRE_SITE=;PRE_LAND=https%3A%2F%2Fwww.lagou.com%2F;LGUID=20190123085612-adf35ed5-1ea9-11e9-b744-5254005c3644;_gid=GA1.2.1809874038.1548204973;index_location_city=%E6%B7%B1%E5%9C%B3;TG-TRACK-CODE=index_search;SEARCH_ID=169bf76c08b548f8830967a1968d10ca;Hm_lpvt_4233e74dff0ae5bd0a3d81c6ccf756e6=1548204985;LGRID=20190123085624-b52a0555-1ea9-11e9-b744-5254005c3644',
    'Host': 'www.lagou.com',
    'Origin': 'https://www.lagou.com',
    'Pragma': 'no-cache',
    'Referer': 'https://www.lagou.com/jobs/list_%E7%88%AC%E8%99%AB?labelWords=&fromSearch=true&suginput=',
    'User-Agent': 'Mozilla/5.0(WindowsNT6.3;WOW64)AppleWebKit/537.36(KHTML,likeGecko)Chrome/71.0.3578.98Safari/537.36',
    'X-Anit-Forge-Code': '0',
    'X-Anit-Forge-Token': 'None',
    'X-Requested-With': 'XMLHttpRequest'

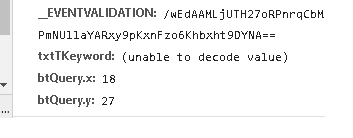

} 浏览器抓包post字段里面有 (unable to decode value) ,requests如何正确的post

李魔佛 发表了文章 • 0 个评论 • 6505 次浏览 • 2018-12-12 14:52

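The data is submitted via POST, and the field txtTKeyword cannot be displayed; apparently it uses some other encoding that the browser cannot decode.

You can inspect it with Fiddler.

In Python, encode the value yourself before posting; otherwise the server cannot understand the submitted data.

Note that there is no need for urllib.parse.quote(uncode_str); calling encode directly is enough (a special case that needs special handling; some sites are just odd).

s = '耐克球鞋'  # the search keyword ("Nike sneakers")
s = s.encode('gb2312')
data = {'__VIEWSTATE': view_state,
        '__EVENTVALIDATION': event_validation,
        'txtTKeyword': s,
        'btQuery.x': 41,
        'btQuery.y': 24,
        }
r = session.post(url=self.base_url, headers=headers,
                 data=data, proxies=self.get_proxy())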
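To see why encoding to GB2312 first makes a difference, the form body can be built with the standard library alone. This is only an illustration of the effect, assuming the server decodes the form as GB2312; as far as I know requests form-encodes a bytes value as it is, which is what `urlencode` does here.

```python
from urllib.parse import urlencode, parse_qs

keyword = '耐克球鞋'

# Form-encode the field from GB2312 bytes versus the default UTF-8 text.
body_gb = urlencode({'txtTKeyword': keyword.encode('gb2312')})
body_utf8 = urlencode({'txtTKeyword': keyword})

print(body_gb)
print(body_utf8)

# A server that decodes the form as GB2312 recovers the keyword from body_gb.
print(parse_qs(body_gb, encoding='gb2312')['txtTKeyword'][0])
```

The two bodies differ byte for byte, so a GB2312 site that receives the UTF-8 version sees a different, garbled keyword.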

Collecting grequests responses in bulk

李魔佛 posted an article • 0 comments • 6266 views • 2018-11-23 10:36

How do you collect the responses returned by grequests in bulk?

import grequests
import requests
import bs4

def simple_request(url):
    page = requests.get(url)
    return page

urls = [
    'http://www.heroku.com',
    'http://python-tablib.org',
    'http://httpbin.org',
    'http://python-requests.org',
    'http://kennethreitz.com'
]

rs = [grequests.get(simple_request(u)) for u in urls]
grequests.map(rs)

Note that the code above is wrong!

grequests.get only accepts a URL. You cannot hand it the result of a function call; here simple_request(u) has already performed the request and returns a Response object.

The correct way:

rs = (grequests.get(u) for u in urls)
responses = grequests.map(rs)
for response in responses:
    market_watch(response.content)

The actual processing of each response goes into the market_watch function.
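One detail worth handling in the loop: `grequests.map` puts `None` in the result list for a request that failed, so `response.content` would raise on those entries. The sketch below uses stand-in response objects so it runs without network access or grequests installed; `process_all` and `FakeResponse` are hypothetical names, and `seen.append` plays the role of `market_watch`.

```python
def process_all(responses, handler):
    """Call handler(content) for every successful response; count the failures."""
    failed = 0
    for response in responses:
        if response is None:  # grequests.map yields None for a failed request
            failed += 1
            continue
        handler(response.content)
    return failed


# Stand-in objects so the sketch runs without network access.
class FakeResponse:
    def __init__(self, content):
        self.content = content


seen = []
failed = process_all([FakeResponse(b'a'), None, FakeResponse(b'b')], seen.append)
print(seen, failed)
```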

Python crawler for all users' posts on Jisilu (集思录): Scrapy writing to a MongoDB database

李魔佛 posted an article • 0 comments • 6213 views • 2018-09-02 21:52

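The project uses the Scrapy framework and writes the data to a MongoDB database. Crawling the whole site took about half an hour and produced about 120,000 records.

The main code of the project is as follows:

The main spider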

# -*- coding: utf-8 -*-
import logging
import re

import scrapy
from scrapy import Request, FormRequest

from jsl import config
from jsl.items import JslItem


class AllcontentSpider(scrapy.Spider):
    name = 'allcontent'

    headers = {
        'Host': 'www.jisilu.cn', 'Connection': 'keep-alive', 'Pragma': 'no-cache',
        'Cache-Control': 'no-cache', 'Accept': 'application/json,text/javascript,*/*;q=0.01',
        'Origin': 'https://www.jisilu.cn', 'X-Requested-With': 'XMLHttpRequest',
        'User-Agent': 'Mozilla/5.0(WindowsNT6.1;WOW64)AppleWebKit/537.36(KHTML,likeGecko)Chrome/67.0.3396.99Safari/537.36',
        'Content-Type': 'application/x-www-form-urlencoded;charset=UTF-8',
        'Referer': 'https://www.jisilu.cn/login/',
        'Accept-Encoding': 'gzip,deflate,br',
        'Accept-Language': 'zh,en;q=0.9,en-US;q=0.8'
    }

    def start_requests(self):
        # Open the login page first so the session cookies are set.
        login_url = 'https://www.jisilu.cn/login/'
        headers = {
            'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8',
            'Accept-Encoding': 'gzip,deflate,br', 'Accept-Language': 'zh,en;q=0.9,en-US;q=0.8',
            'Cache-Control': 'no-cache', 'Connection': 'keep-alive',
            'Host': 'www.jisilu.cn', 'Pragma': 'no-cache', 'Referer': 'https://www.jisilu.cn/',
            'Upgrade-Insecure-Requests': '1',
            'User-Agent': 'Mozilla/5.0(WindowsNT6.1;WOW64)AppleWebKit/537.36(KHTML,likeGecko)Chrome/67.0.3396.99Safari/537.36'}
        yield Request(url=login_url, headers=headers, callback=self.login, dont_filter=True)

    def login(self, response):
        # Submit the login form; the field names come from a packet capture.
        url = 'https://www.jisilu.cn/account/ajax/login_process/'
        data = {
            'return_url': 'https://www.jisilu.cn/',
            'user_name': config.username,
            'password': config.password,
            'net_auto_login': '1',
            '_post_type': 'ajax',
        }
        yield FormRequest(
            url=url,
            headers=self.headers,
            formdata=data,
            callback=self.parse,
            dont_filter=True
        )

    def parse(self, response):
        # Walk through every page of the post list.
        for i in range(1, 3726):
            focus_url = 'https://www.jisilu.cn/home/explore/sort_type-new__day-0__page-{}'.format(i)
            yield Request(url=focus_url, headers=self.headers, callback=self.parse_page, dont_filter=True)

    def parse_page(self, response):
        nodes = response.xpath('//div[@class="aw-question-list"]/div')
        for node in nodes:
            each_url = node.xpath('.//h4/a/@href').extract_first()
            yield Request(url=each_url, headers=self.headers, callback=self.parse_item, dont_filter=True)

    def parse_item(self, response):
        item = JslItem()
        title = response.xpath('//div[@class="aw-mod-head"]/h1/text()').extract_first()
        s = response.xpath('//div[@class="aw-question-detail-txt markitup-box"]').xpath('string(.)').extract_first()
        ret = re.findall(r'(.*?)\.donate_user_avatar', s, re.S)
        try:
            content = ret[0].strip()
        except:
            content = None
        createTime = response.xpath('//div[@class="aw-question-detail-meta"]/span/text()').extract_first()
        resp_no = response.xpath('//div[@class="aw-mod aw-question-detail-box"]//ul/h2/text()').re_first(r'\d+')
        url = response.url
        item['title'] = title.strip()
        item['content'] = content
        try:
            item['resp_no'] = int(resp_no)
        except Exception as e:
            logging.warning(e)
            item['resp_no'] = None
        item['createTime'] = createTime
        item['url'] = url.strip()

        # One entry per reply: {user_index: [agree count, reply text]}
        resp = []
        for index, reply in enumerate(response.xpath('//div[@class="aw-mod-body aw-dynamic-topic"]/div[@class="aw-item"]')):
            replay_user = reply.xpath('.//div[@class="pull-left aw-dynamic-topic-content"]//p/a/text()').extract_first()
            rep_content = reply.xpath(
                './/div[@class="pull-left aw-dynamic-topic-content"]//div[@class="markitup-box"]/text()').extract_first()
            # print rep_content
            agree = reply.xpath('.//em[@class="aw-border-radius-5 aw-vote-count pull-left"]/text()').extract_first()
            resp.append({replay_user.strip() + '_{}'.format(index): [int(agree), rep_content.strip()]})
        item['resp'] = resp
        yield item

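The login function simulates logging in to Jisilu; the form data to submit can be found by capturing the traffic.

After that the spider crawls page by page. The logic is simple.

The pipeline then writes the items to MongoDB.

import pymongo
from collections import OrderedDict


class JslPipeline(object):
    def __init__(self):
        self.db = pymongo.MongoClient(host='10.18.6.1', port=27017)
        # self.user = u'neo牛3'  # change to the target user name, e.g. 毛之川, then look up the user's id in the source of the user's page; for example 持有封基 is 8132
        self.collection = self.db['db_parker']['jsl']

    def process_item(self, item, spider):
        # insert_one replaces Collection.insert, which PyMongo 4 removed
        self.collection.insert_one(OrderedDict(item))
        return item

(Screenshot: the scraped data stored in MongoDB.)

Original article

Please credit the source when reposting: http://30daydo.com/publish/article/351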

The current way to log in Scrapy

李魔佛 posted an article • 0 comments • 4142 views • 2018-08-15 15:01

from scrapy import log
log.msg("This is a warning", level=log.WARNING)

Adding logging in a Spider

The recommended way to log from a spider is the Spider's log() method. It already fills in the spider argument of scrapy.log.msg().

The other arguments are passed straight to the msg() method.

The scrapy.log module: scrapy.log.start(logfile=None, loglevel=None, logstdout=None) starts logging. It must be called before any message is logged; otherwise anything logged before the call is lost.

But at run time a warning appears:

[py.warnings] WARNING: E:\git\CrawlMan\bilibili\bilibili\spiders\bili.py:14: ScrapyDeprecationWarning: log.msg has been deprecated, create a python logger and log through it instead
log.msg

So log.msg is officially deprecated.

The current usage:

# -*- coding: utf-8 -*-
import logging

import scrapy
# from scrapy import log


class BiliSpider(scrapy.Spider):
    name = 'ordinary'  # the name used to launch the spider, as in the link above
    allowed_domains = ["bilibili.com"]
    start_urls = [
        "https://www.bilibili.com/"
    ]

    def parse(self, response):
        logging.info('====================================================')
        content = response.xpath("//div[@class='num-wrap']").extract_first()
        logging.info(content)
        logging.info('====================================================')
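The deprecation warning asks for "a python logger", and a named logger is usually easier to filter than the root logger used by `logging.info`. A minimal standard-library sketch, assuming a hypothetical logger name `bili` and a hypothetical helper `report`; inside a real spider, `self.logger` offers the same methods.

```python
import logging

logger = logging.getLogger('bili')  # hypothetical logger name


def report(content):
    """Log the extracted content between two separator lines, via a named logger."""
    logger.info('=' * 52)
    logger.info(content)
    logger.info('=' * 52)


logging.basicConfig(level=logging.INFO, format='%(name)s %(levelname)s: %(message)s')
report('<div class="num-wrap">...</div>')
```

With a named logger, the level for this spider can be changed through `logging.getLogger('bili').setLevel(...)` without touching other loggers.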

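The latest Chrome no longer supports info lite

李魔佛 posted an article • 0 comments • 3036 views • 2018-06-25 18:58

I had to downgrade......

Version 65.0.3325.162 (Official Build) (64-bit)

This is the newest version that still supports info lite.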

Message: invalid selector: Compound class names not permitted

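李魔佛 posted an article • 0 comments • 3711 views • 2018-01-30 00:59

    driver.find_element_by_class_name("login-tab login-tab-r")

Looking up an element by a compound class name (several class names separated by spaces), as above, raises this error:

Message: invalid selector: Compound class names not permitted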

For example, the JD.com login page contains:

    <div id="content">
      <div class="login-wrap">
        <div class="w">
          <div class="login-form">
            <div class="login-tab login-tab-l">
              <a href="javascript:void(0)" clstag="pageclick|keycount|201607144|1"> 扫码登录</a>
            </div>
            <div class="login-tab login-tab-r">
              <a href="javascript:void(0)" clstag="pageclick|keycount|201607144|2">账户登录</a>
            </div>
            <div class="login-box">
              <div class="mt tab-h">
              </div>
              <div class="msg-wrap">
                <div class="msg-error hide"><b></b></div>
              </div>

The element I want is <div class="login-tab login-tab-r"> (the account-login tab), so a CSS selector should be used instead:

    driver.find_element_by_css_selector('div.login-tab.login-tab-r')
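The rule behind that selector is mechanical: each space-separated class name becomes a ".name" segment. The helper below is a hypothetical convenience function (class_attr_to_css is not part of Selenium) that builds such a selector from a class attribute value; it is a sketch under that assumption, and the resulting string is what would be passed to find_element_by_css_selector.

```python
def class_attr_to_css(class_attr, tag=""):
    """Turn a class attribute such as "a b" into a CSS selector such as ".a.b".

    `tag` is an optional element name to prefix, e.g. "div".
    """
    names = class_attr.split()
    if not names:
        raise ValueError("class attribute is empty")
    return tag + "".join("." + name for name in names)


# The compound class from the JD.com login page above.
selector = class_attr_to_css("login-tab login-tab-r", tag="div")
print(selector)
```

A single class name still works, so the helper can be used whether or not the class attribute is compound.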

Simulating a login to vexx.pro in Python to fetch your total assets, coin values, and other account information

李魔佛 posted an article • 0 comments • 3697 views • 2018-01-10 03:22

    # -*- coding: utf-8 -*-
    import requests

    session = requests.Session()
    user = ''      # fill in your username
    password = ''  # fill in your password

    def getCode():
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/62.0.3202.94 Safari/537.36'}

        # Download the captcha image so it can be read and typed in by hand
        url = 'http://vexx.pro/verify/code.html'
        s = session.get(url=url, headers=headers)
        with open('code.png', 'wb') as f:
            f.write(s.content)
        code = input('input the code: ')
        print('code is', code)

        # Log in through the same session so the captcha cookie is reused
        login_url = 'http://vexx.pro/login/up_login.html'
        post_data = {
            'moble': user,
            'mobles': '+86',
            'password': password,
            'verify': code,
            'login_token': ''}
        login_s = session.post(url=login_url, headers=headers, data=post_data)
        print(login_s.status_code)

        # Query the total assets (zzc) with the logged-in session
        zzc_url = 'http://vexx.pro/ajax/check_zzc/'
        zzc_s = session.get(url=zzc_url, headers=headers)
        print(zzc_s.text)

    def main():
        getCode()

    if __name__ == '__main__':
        main()

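Fill in your own username and password; you will be asked for the captcha once along the way.

You can also save the session locally, so that the next run does not need the password again.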
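One way to keep a session between runs is to write its cookies to a file and read them back later. The sketch below uses only the standard library and a plain dict of cookies; the file name vexx_cookies.json, the helper names, and the cookie name PHPSESSID are placeholders chosen for illustration, not values taken from the site.

```python
import json
import os
import tempfile


def save_cookies(cookies, path):
    """Write a {name: value} cookie dict to a JSON file."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(cookies, f)


def load_cookies(path):
    """Read the cookie dict back; return an empty dict if no file exists yet."""
    if not os.path.exists(path):
        return {}
    with open(path, encoding="utf-8") as f:
        return json.load(f)


# With requests, the dict would come from session.cookies.get_dict()
# and be restored with session.cookies.update(restored).
cookie_path = os.path.join(tempfile.mkdtemp(), "vexx_cookies.json")
save_cookies({"PHPSESSID": "example-session-id"}, cookie_path)  # placeholder cookie

restored = load_cookies(cookie_path)
print(restored)
```

Whether the restored cookies are still accepted depends on how long the server keeps the session alive, so the login step is still needed as a fallback.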

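Postscript: a few months later, this site was confirmed to be a scam whose operators ran off with users' money, and logging in no longer works. Please don't fall for it again.

Original post: http://30daydo.com/article/263

Please credit the source when reposting.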

[scrapy] Several ways to change a spider's default User-Agent

李魔佛 posted an article • 0 comments • 9947 views • 2017-12-14 16:22

3. Target site:

https://helloacm.com/api/user-agent/

This site simply returns the visitor's User-Agent, so you can check directly whether your User-Agent setting took effect.

Opening the URL

https://helloacm.com/api/user-agent/

in a browser, the site returns:

"Mozilla\/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit\/537.36 (KHTML, like Gecko) Chrome\/62.0.3202.94 Safari\/537.36"

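The quoted reply with its escaped slashes (\/) looks like a JSON-encoded string. Assuming that is the case, the sketch below decodes it with the standard json module to recover the plain User-Agent; the variable names are illustrative, and the raw text is the browser reply shown above.

```python
import json

# The reply body exactly as the site returned it in the browser.
raw_body = (
    r'"Mozilla\/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit\/537.36 '
    r'(KHTML, like Gecko) Chrome\/62.0.3202.94 Safari\/537.36"'
)

# json.loads removes the surrounding quotes and turns "\/" back into "/".
user_agent = json.loads(raw_body)
print(user_agent)
```

Comparing the decoded value with the configured User-Agent is then a plain string comparison.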
3. Configure scrapy

Change headervalidation.py in the spiders folder to the following:

    import scrapy

    class HeadervalidationSpider(scrapy.Spider):
        name = 'headervalidation'
        allowed_domains = ['helloacm.com']
        start_urls = ['http://helloacm.com/api/user-agent/']

        def parse(self, response):
            # Print the response body, i.e. the User-Agent the site received
            print('*' * 20)
            print(response.body)
            print('*' * 20)

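The project only prints the body of the response, that is, the User-Agent the site saw for the request.

Run: scrapy crawl headervalidation

You will find that the site returns 503. Next, we change scrapy's User-Agent.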

Method 1:

Change the User-Agent in settings.py:

    # Crawl responsibly by identifying yourself (and your website) on the user-agent
    USER_AGENT = 'Hello World'

Then run scrapy crawl headervalidation again.

This time the normal scrapy output appears:

2017-12-14 16:17:35 [scrapy.extensions.telnet] DEBUG: Telnet console listening on 127.0.0.1:6023

2017-12-14 16:17:35 [scrapy.downloadermiddlewares.redirect] DEBUG: Redirecting (301) to <GET https://helloacm.com/api/us