Python crawler: simulating a Zhihu login, pushing Zhihu articles to Kindle as e-books, and fetching the questions you follow

低调的哥哥 posted this article • 0 comments • 39489 views • 2016-05-12 17:53

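I often browse Zhihu, and while at work I come across good answers that are too long to finish on the spot. So I wrote a Python script that scrapes the answers I want and pushes them to my Kindle, where I can read them at leisure after work. Content I like can also be compiled into an e-book and saved for a quiet weekend.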

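#2016-08-19 update:

Added a module that simulates logging in to Zhihu, automatically fetches the IDs of the questions you follow, then scrapes all the answers to those questions and pushes them to Kindle.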

# -*-coding=utf-8-*-
__author__ = 'Rocky'

from email.mime.text import MIMEText
from email.mime.multipart import MIMEMultipart
import smtplib
from email import Encoders, Utils
import urllib2
import time
import re
import sys
import os
from bs4 import BeautifulSoup
from email.Header import Header

# Python 2 only: make utf-8 the default codec for implicit str/unicode conversion
reload(sys)
sys.setdefaultencoding('utf-8')

class GetContent():
    def __init__(self, id):
        # The first argument is the ID of the question to download.
        # For example, if the question link is https://www.zhihu.com/question/29372574
        # then run: python zhihu.py 29372574
        id_link = "/question/" + id
        self.getAnswer(id_link)

    def save2file(self, filename, content):
        # Append the content to the e-book file
        filename = filename + ".txt"
        with open(filename, 'a') as f:
            f.write(content)

    def getAnswer(self, answerID):
        host = "http://www.zhihu.com"
        url = host + answerID
        print url
        user_agent = "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Trident/5.0)"
        # Build a header so the request looks like it comes from a browser
        header = {"User-Agent": user_agent}
        req = urllib2.Request(url, headers=header)
        try:
            resp = urllib2.urlopen(req)
        except Exception:
            print "Time out. Retry"
            time.sleep(30)
            # try to switch with proxy ip
            resp = urllib2.urlopen(req)
        # The page source is now fetched; next, extract the content you want.
        # BeautifulSoup makes this convenient.
        try:
            bs = BeautifulSoup(resp)
        except Exception:
            print "Beautifulsoup error"
            return None
        # The page title
        title = bs.title
        filename_old = title.string.strip()
        print filename_old
        # File name for the saved content. File names cannot contain certain
        # special characters, so filter them out with a regular expression.
        filename = re.sub('[\/:*?"<>|]', '-', filename_old)
        self.save2file(filename, title.string)
        detail = bs.find("div", class_="zm-editable-content")
        self.save2file(filename, "\n\n\n\n--------------------Detail----------------------\n\n")
        # The question's supplementary description
        if detail is not None:
            for i in detail.strings:
                self.save2file(filename, unicode(i))
        answer = bs.find_all("div", class_="zm-editable-content clearfix")
        k = 0
        index = 0
        for each_answer in answer:
            self.save2file(filename, "\n\n-------------------------answer %s via -------------------------\n\n" % k)
            for a in each_answer.strings:
                # Loop over the content of each answer and save it to the file
                self.save2file(filename, unicode(a))
            k += 1
            index = index + 1
        smtp_server = 'smtp.126.com'
        from_mail = 'your@126.com'
        password = 'yourpassword'
        to_mail = 'yourname@kindle.cn'
        # send_kindle=MailAtt(smtp_server,from_mail,password,to_mail)
        # send_kindle.send_txt(filename)
        # Call the mail function to send the e-book to your Kindle account's
        # e-mail address, so that your Kindle receives it
        print filename

class MailAtt():
    def __init__(self, smtp_server, from_mail, password, to_mail):
        # Initialize the mailbox settings
        self.server = smtp_server
        self.username = from_mail.split("@")[0]
        self.from_mail = from_mail
        self.password = password
        self.to_mail = to_mail

    def send_txt(self, filename):
        # Pay special attention to character encoding when sending the attachment.
        # I spent quite a while debugging this because the received file was always garbled.
        self.smtp = smtplib.SMTP()
        self.smtp.connect(self.server)
        self.smtp.login(self.username, self.password)
        self.msg = MIMEMultipart()
        self.msg['to'] = self.to_mail
        self.msg['from'] = self.from_mail
        self.msg['Subject'] = "Convert"
        self.filename = filename + ".txt"
        self.msg['Date'] = Utils.formatdate(localtime=1)
        content = open(self.filename.decode('utf-8'), 'rb').read()
        # print content
        self.att = MIMEText(content, 'base64', 'utf-8')
        self.att['Content-Type'] = 'application/octet-stream'
        # self.att["Content-Disposition"] = "attachment;filename=\"%s\"" %(self.filename.encode('gb2312'))
        self.att["Content-Disposition"] = "attachment;filename=\"%s\"" % Header(self.filename, 'gb2312')
        # print self.att["Content-Disposition"]
        self.msg.attach(self.att)
        self.smtp.sendmail(self.msg['from'], self.msg['to'], self.msg.as_string())
        self.smtp.quit()

if __name__ == "__main__":
    # Directory dedicated to the downloaded e-books
    sub_folder = os.path.join(os.getcwd(), "content")
    if not os.path.exists(sub_folder):
        os.mkdir(sub_folder)
    os.chdir(sub_folder)

    # The first argument is the ID of the question to download.
    # For example, if the question link is https://www.zhihu.com/question/29372574
    # then run: python zhihu.py 29372574
    id = sys.argv[1]
    # id_link="/question/"+id
    # Creating the object fetches and saves the question
    obj = GetContent(id)
    # obj.getAnswer(id_link)
    print "Done"
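The two fiddly parts of the script above are cleaning the title into a legal file name and attaching a UTF-8 text file without garbling it. Here is a minimal sketch of both steps using only the standard library. It is written for Python 3 (the script above is Python 2), it builds the message without sending it, and the function names `safe_filename` and `build_kindle_message` are made up for this illustration. Note also that this version filters the backslash too, which the original pattern does not.

```python
import re
from email.message import EmailMessage

# Characters commonly forbidden in file names. The script above filters the
# same set; the backslash is an extra added in this sketch.
_FORBIDDEN = re.compile(r'[\\/:*?"<>|]')


def safe_filename(title):
    """Replace characters that are illegal in file names with '-'."""
    return _FORBIDDEN.sub("-", title.strip())


def build_kindle_message(from_mail, to_mail, filename, text):
    """Build (but do not send) a mail carrying `text` as a UTF-8 attachment."""
    msg = EmailMessage()
    msg["From"] = from_mail
    msg["To"] = to_mail
    msg["Subject"] = "Convert"
    msg.set_content("See attachment.")
    # Passing bytes makes the library base64-encode the payload; the
    # octet-stream content type mirrors what the script above sets.
    msg.add_attachment(
        text.encode("utf-8"),
        maintype="application",
        subtype="octet-stream",
        filename=filename,
    )
    return msg


# Placeholder addresses taken from the script above.
name = safe_filename(" 知乎/Kindle:推送? ") + ".txt"
message = build_kindle_message("your@126.com", "yourname@kindle.cn", name, "正文内容")
attachment = next(message.iter_attachments())
print(attachment.get_filename())
```

To actually send it you would still hand `message` to `smtplib`, as `MailAtt.send_txt` does; that part is left out here so the sketch runs without a mail server.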

#######################################

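2016.8.19 update

Added a new feature: simulate a Zhihu login, automatically fetch the answers to the questions you follow, build them into e-books, and send them to Kindle.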

# -*-coding=utf-8-*-
__author__ = 'Rocky'

import requests
import cookielib
import re
import json
import time
import os
from getContent import GetContent

agent='Mozilla/5.0 (Windows NT 5.1; rv:33.0) Gecko/20100101 Firefox/33.0'
headers={'Host':'www.zhihu.com',
         'Referer':'https://www.zhihu.com',
         'User-Agent':agent}

# Global variables: one session shared by every request, with cookies kept on disk
session=requests.session()
session.cookies=cookielib.LWPCookieJar(filename="cookies")
try:
    session.cookies.load(ignore_discard=True)
except Exception:
    print "Cookie can't load"

def isLogin():
    # The settings page returns 200 only when logged in; otherwise it redirects
    url='https://www.zhihu.com/settings/profile'
    login_code=session.get(url,headers=headers,allow_redirects=False).status_code
    print login_code
    if login_code == 200:
        return True
    else:
        return False

def get_xsrf():
    # Read the hidden _xsrf token from the home page
    url='http://www.zhihu.com'
    r=session.get(url,headers=headers,allow_redirects=False)
    txt=r.text
    result=re.findall(r'<input type=\"hidden\" name=\"_xsrf\" value=\"(\w+)\"/>',txt)[0]
    return result

def getCaptcha():
    # Download the captcha image; r is the current time in milliseconds
    #r=1471341285051
    r=(time.time()*1000)
    url='http://www.zhihu.com/captcha.gif?r='+str(r)+'&type=login'
    image=session.get(url,headers=headers)
    f=open("photo.jpg",'wb')
    f.write(image.content)
    f.close()

def Login():
    xsrf=get_xsrf()
    print xsrf
    print len(xsrf)
    login_url='http://www.zhihu.com/login/email'
    # Replace the '*' placeholders with your own password and e-mail
    data={
        '_xsrf':xsrf,
        'password':'*',
        'remember_me':'true',
        'email':'*'
    }
    try:
        content=session.post(login_url,data=data,headers=headers)
        login_code=content.text
        print content.status_code
        # This line is important: if there is no status, it fails and the except branch runs
        #print content.status
        if content.status_code != requests.codes.ok:
            print "Need to verification code !"
            getCaptcha()
            #print "Please input the code of the captcha"
            code=raw_input("Please input the code of the captcha")
            data['captcha']=code
            content=session.post(login_url,data=data,headers=headers)
            print content.status_code
            if content.status_code==requests.codes.ok:
                print "Login successful"
                session.cookies.save()
            #print login_code
        else:
            session.cookies.save()
    except Exception:
        print "Error in login"
        return False

def focus_question():
    focus_id=[]
    url='https://www.zhihu.com/question/following'
    content=session.get(url,headers=headers)
    print content
    p=re.compile(r'<a class="question_link" href="/question/(\d+)" target="_blank" data-id')
    id_list=p.findall(content.text)
    pattern=re.compile(r'<input type=\"hidden\" name=\"_xsrf\" value=\"(\w+)\"/>')
    result=re.findall(pattern,content.text)[0]
    print result
    for i in id_list:
        print i
        focus_id.append(i)

    url_next='https://www.zhihu.com/node/ProfileFollowedQuestionsV2'
    page=20
    offset=20
    end_page=500
    xsrf=re.findall(r'<input type=\"hidden\" name=\"_xsrf\" value=\"(\w+)\"',content.text)[0]
    while offset < end_page:
        #para='{"offset":20}'
        #print para
        print "page: %d" %offset
        params={"offset":offset}
        params_json=json.dumps(params)
        data={
            'method':'next',
            'params':params_json,
            '_xsrf':xsrf
        }
        # Note: the POST data above needs an _xsrf field, otherwise the server
        # returns a 403 error. I never saw the xsrf being submitted while
        # capturing the traffic, so I worked this out by trial and error.
        offset=offset+page
        headers_l={
            'Host':'www.zhihu.com',
            'Referer':'https://www.zhihu.com/question/following',
            'User-Agent':agent,
            'Origin':'https://www.zhihu.com',
            'X-Requested-With':'XMLHttpRequest'
        }
        try:
            s=session.post(url_next,data=data,headers=headers_l)
            #print s.status_code
            #print s.text
            msgs=json.loads(s.text)
            msg=msgs['msg']
            for i in msg:
                id_sub=re.findall(p,i)
                for j in id_sub:
                    print j
                    id_list.append(j)
        except Exception:
            print "Getting Error "
    return id_list

def main():
    if isLogin():
        print "Has login"
    else:
        print "Need to login"
        Login()
    list_id=focus_question()
    for i in list_id:
        print i
        obj=GetContent(i)
    #getCaptcha()

if __name__=='__main__':
    # Directory dedicated to the downloaded e-books
    sub_folder=os.path.join(os.getcwd(),"content")
    if not os.path.exists(sub_folder):
        os.mkdir(sub_folder)
    os.chdir(sub_folder)
    main()
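The parsing side of the login script can be tried offline, without touching Zhihu at all. The sketch below (Python 3, standard library only) pulls out the `_xsrf` token and the question IDs with simplified versions of the script's regular expressions, and generates the same sequence of "next page" form payloads. The helper names and the HTML fragment are invented for illustration, and the patterns only match Zhihu's markup as it was in 2016.

```python
import json
import re

# Simplified from the patterns in the script above; they assume Zhihu's
# 2016 markup and would need updating for any other layout.
XSRF_RE = re.compile(r'<input type="hidden" name="_xsrf" value="(\w+)"')
QUESTION_RE = re.compile(r'<a class="question_link" href="/question/(\d+)"')


def extract_xsrf(html):
    """Return the hidden _xsrf token, or None when the field is absent."""
    match = XSRF_RE.search(html)
    return match.group(1) if match else None


def extract_question_ids(html):
    """Return the followed-question IDs found in one chunk of HTML."""
    return QUESTION_RE.findall(html)


def paging_payloads(xsrf, page_size=20, end=500):
    """Yield the form data for each 'next page' POST, starting at offset 20."""
    for offset in range(page_size, end, page_size):
        yield {
            "method": "next",
            "params": json.dumps({"offset": offset}),
            "_xsrf": xsrf,  # per the note above, leaving this out gives a 403
        }


# Hypothetical HTML fragment, invented purely for this example.
sample = (
    '<input type="hidden" name="_xsrf" value="abc123"/>'
    '<a class="question_link" href="/question/29372574" target="_blank" data-id="1">'
)
token = extract_xsrf(sample)
print(token, extract_question_ids(sample))
print(next(paging_payloads(token)))
```

Returning `None` instead of indexing `[0]` means a page without the token (for example, when the login has expired) can be handled explicitly rather than raising an `IndexError`.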

Click here for the full code:

github: https://github.com/Rockyzsu/zhihuToKindle


Packet capture analysis with Firefox (analyzing Lagou's traffic)

低调的哥哥 posted this article • 16 comments • 20498 views • 2016-05-09 18:30

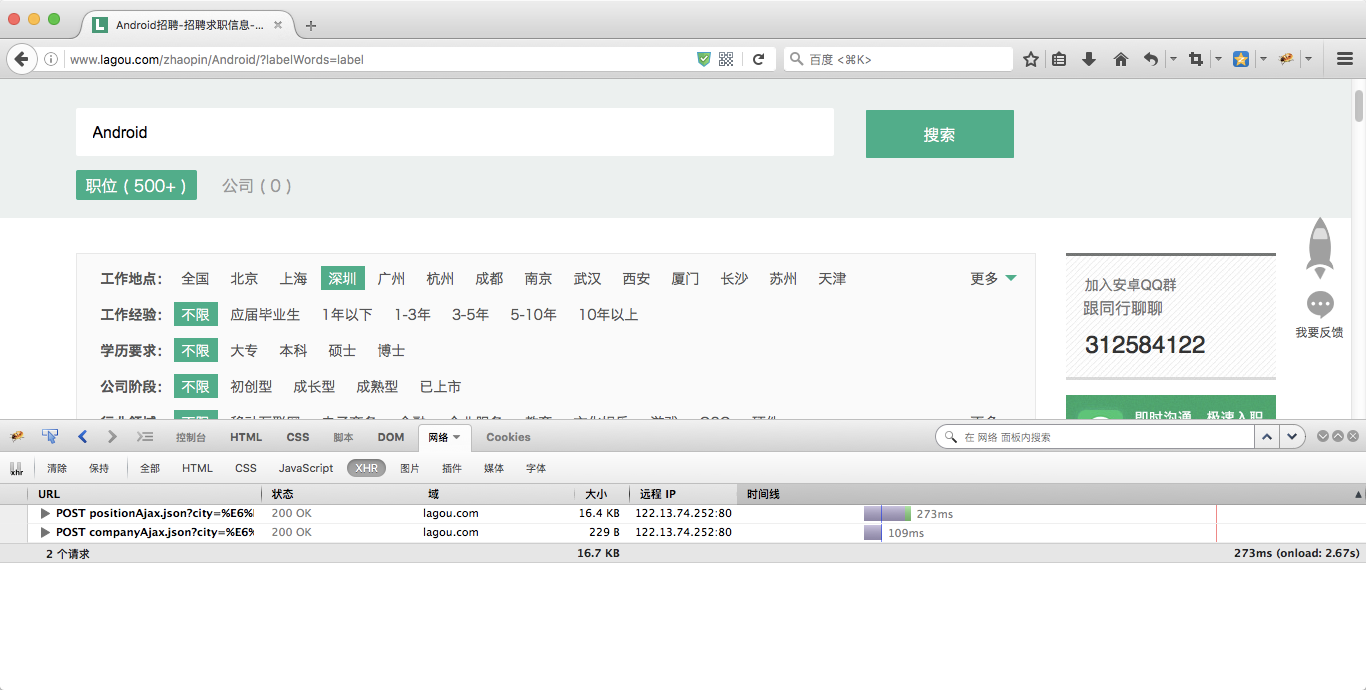

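For some JavaScript-driven pages, the content of the dynamic page is not visible in the page source, but capturing the traffic reveals the JSON data behind it. The page fills itself in from this JSON, and that is what you then see displayed.

I have used Chrome, Firefox, and Wireshark to capture traffic. Chrome is the most convenient since it needs no third-party plugin, but when a new page opens you have to open a new capture panel, so some real-time data gets missed.

Wireshark requires learning its own filter rules, so the learning cost is there.

The best option is still Firefox with the third-party Firebug plugin.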

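Next, let's take Lagou as the example.

Turn on Firebug.

Go to www.lagou.com and click any job category in the left sidebar; here I use Android.

In Firebug, click the "Net" tab and then select XHR.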

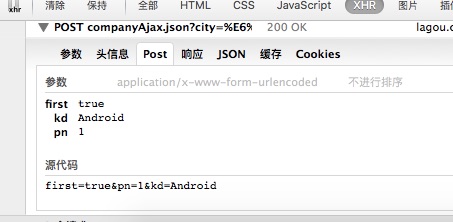

The POST entry is what we care about, so click the POST link.

After Android was clicked, the browser sent several parameters to Lagou's server:

first = true, kd = android (the keyword), and pn = 1 (the page number).

So we can imitate this step and build a request that simulates the user's click:

post_data = {'first':'true','kd':'Android','pn':'1'}

Then submit the data with requests, the simplest HTTP library in Python. This is exactly the data seen in the capture:

import requests

url = "http://www.lagou.com/jobs/posi ... ot%3B

return_data=requests.post(url,data=post_data)

print return_data.text
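To see what that POST actually carries, and what to do with the reply, here is a rough offline sketch in Python 3 using only the standard library. It builds the form-encoded body without sending anything, then reads a cut-down response that borrows its field names (`content`, `positionResult`, `result`, `positionName`, `city`, `salary`) from the captured JSON. The endpoint is a placeholder because the real URL is truncated above, and both function names are made up for this example.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request

# Placeholder endpoint: the real URL is truncated in the article, so this
# address is an assumption used only to construct the request object.
SEARCH_URL = "http://www.example.com/jobs/search.json"


def build_search_request(keyword, page=1, first=True):
    """Build (without sending) the form-encoded POST seen in the capture."""
    form = {"first": "true" if first else "false", "kd": keyword, "pn": str(page)}
    body = urlencode(form).encode("utf-8")
    return Request(SEARCH_URL, data=body, method="POST")


def summarize_positions(response_text):
    """Pull (positionName, city, salary) out of a response shaped like the capture."""
    payload = json.loads(response_text)
    results = payload["content"]["positionResult"]["result"]
    return [(r["positionName"], r["city"], r["salary"]) for r in results]


request = build_search_request("Android")
print(request.data)

# A cut-down response that reuses field names from the captured JSON.
sample = json.dumps({
    "success": True,
    "content": {"positionResult": {"result": [
        {"positionName": "Android", "city": "北京", "salary": "20k-35k"},
    ]}},
})
print(summarize_positions(sample))
```

Changing `page` is all it takes to walk through later result pages, since `pn` is the only parameter that varies between them in the capture.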

And there it is: the printed output is the JSON returned by the server.

{"code":0,"success":true,"requestId":null,"resubmitToken":null,"msg":null,"content":{"pageNo":1,"pageSize":15,"positionResult":{"totalCount":5000,"pageSize":15,"locationInfo":{"city":null,"district":null,"businessZone":null},"result":[{"createTime":"2016-05-05 17:27:50","companyId":50889,"positionName":"Android","positionType":"移动开发","workYear":"3-5年","education":"本科","jobNature":"全职","companyShortName":"和创(北京)科技股份有限公司","city":"北京","salary":"20k-35k","financeStage":"上市公司","positionId":1455217,"companyLogo":"i/image/M00/03/44/Cgp3O1ax7JWAOSzUAABS3OF0A7w289.jpg","positionFirstType":"技术","companyName":"和创科技(红圈营销)","positionAdvantage":"上市公司,持续股权激励政策,技术极客云集","industryField":"移动互联网 · 企业服务","score":1372,"district":"西城区","companyLabelList":["弹性工作","敏捷研发","股票期权","年底双薪"],"deliverCount":13,"leaderName":"刘学臣","companySize":"2000人以上","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":48,"imstate":"today","createTimeSort":1462440470000,"positonTypesMap":null,"hrScore":77,"flowScore":148,"showCount":6627,"pvScore":73.2258060280967,"plus":"是","businessZones":["新街口","德胜门","小西天"],"publisherId":994817,"loginTime":1462876049000,"appShow":3141,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":99,"adWord":1,"formatCreateTime":"2016-05-05","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-05 18:30:16","companyId":50889,"positionName":"Android","positionType":"移动开发","workYear":"3-5年","education":"本科","jobNature":"全职","companyShortName":"和创(北京)科技股份有限公司","city":"北京","salary":"20k-35k","financeStage":"上市公司","positionId":1440576,"companyLogo":"i/image/M00/03/44/Cgp3O1ax7JWAOSzUAABS3OF0A7w289.jpg","positionFirstType":"技术","companyName":"和创科技(红圈营销)","positionAdvantage":"上市公司,持续股权激励政策,技术爆棚!","industryField":"移动互联网 · 
企业服务","score":1372,"district":"海淀区","companyLabelList":["弹性工作","敏捷研发","股票期权","年底双薪"],"deliverCount":6,"leaderName":"刘学臣","companySize":"2000人以上","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":48,"imstate":"today","createTimeSort":1462444216000,"positonTypesMap":null,"hrScore":77,"flowScore":148,"showCount":3214,"pvScore":73.37271526202157,"plus":"是","businessZones":["双榆树","中关村","大钟寺"],"publisherId":994817,"loginTime":1462876049000,"appShow":1782,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":99,"adWord":1,"formatCreateTime":"2016-05-05","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-10 18:41:29","companyId":94307,"positionName":"Android","positionType":"移动开发","workYear":"3-5年","education":"本科","jobNature":"全职","companyShortName":"宁波海大物联科技有限公司","city":"宁波","salary":"8k-15k","financeStage":"成长型(A轮)","positionId":1070249,"companyLogo":"image2/M00/03/32/CgqLKVXtWiuAUbXgAAB1g_5FW3Y484.png?cc=0.6152940313331783","positionFirstType":"技术","companyName":"海大物联","positionAdvantage":"一流的技术团队,丰厚的薪资回报。","industryField":"移动互联网 · 企业服务","score":1353,"district":"鄞州区","companyLabelList":["节日礼物","年底双薪","带薪年假","年度旅游"],"deliverCount":0,"leaderName":"暂没有填写","companySize":"50-150人","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":48,"imstate":"today","createTimeSort":1462876889000,"positonTypesMap":null,"hrScore":75,"flowScore":167,"showCount":1031,"pvScore":47.6349840620252,"plus":"是","businessZones":null,"publisherId":2494230,"loginTime":1462885305000,"appShow":184,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":63,"adWord":0,"formatCreateTime":"18:41发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-10 
17:57:43","companyId":89004,"positionName":"Android","positionType":"移动开发","workYear":"1-3年","education":"学历不限","jobNature":"全职","companyShortName":"温州康宁医院股份有限公司","city":"杭州","salary":"10k-20k","financeStage":"上市公司","positionId":1387825,"companyLogo":"i/image/M00/02/C2/CgqKkVabp--APWTjAACHHJJxyPc207.png","positionFirstType":"技术","companyName":"的的心理","positionAdvantage":"上市公司内部创业项目。优质福利待遇+期权","industryField":"移动互联网 · 医疗健康","score":1344,"district":"江干区","companyLabelList":["年底双薪","股票期权","带薪年假","招募合伙人"],"deliverCount":5,"leaderName":"杨怡","companySize":"500-2000人","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":48,"imstate":"today","createTimeSort":1462874263000,"positonTypesMap":null,"hrScore":74,"flowScore":153,"showCount":1312,"pvScore":66.87818057124453,"plus":"是","businessZones":["四季青","景芳"],"publisherId":3655492,"loginTime":1462873104000,"appShow":573,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":69,"adWord":0,"formatCreateTime":"17:57发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-10 13:49:47","companyId":15071,"positionName":"Android","positionType":"移动开发","workYear":"3-5年","education":"本科","jobNature":"全职","companyShortName":"杭州短趣网络传媒技术有限公司","city":"杭州","salary":"10k-20k","financeStage":"成长型(A轮)","positionId":1803257,"companyLogo":"image2/M00/0B/80/CgpzWlYYse2AJgc0AABG9iSEWAE052.jpg","positionFirstType":"技术","companyName":"短趣网","positionAdvantage":"高额项目奖金,行业内有竞争力的薪资水平","industryField":"移动互联网 · 
社交网络","score":1343,"district":null,"companyLabelList":["绩效奖金","年终分红","五险一金","通讯津贴"],"deliverCount":1,"leaderName":"王强宇","companySize":"50-150人","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":28,"imstate":"today","createTimeSort":1462859387000,"positonTypesMap":null,"hrScore":68,"flowScore":178,"showCount":652,"pvScore":32.82081357576065,"plus":"否","businessZones":null,"publisherId":4362468,"loginTime":1462870318000,"appShow":0,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":69,"adWord":0,"formatCreateTime":"13:49发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-10 13:55:08","companyId":28422,"positionName":"Android","positionType":"移动开发","workYear":"3-5年","education":"本科","jobNature":"全职","companyShortName":"成都品果科技有限公司","city":"北京","salary":"18k-30k","financeStage":"成长型(B轮)","positionId":290875,"companyLogo":"i/image/M00/02/F3/Cgp3O1ah7FuAbSnkAACMlcPiWXk393.png","positionFirstType":"技术","companyName":"camera360","positionAdvantage":"高大上的福利待遇、发展前景等着你哦!","industryField":"移动互联网","score":1339,"district":"海淀区","companyLabelList":["年终分红","绩效奖金","年底双薪","五险一金"],"deliverCount":6,"leaderName":"徐灏","companySize":"15-50人","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":0,"imstate":"disabled","createTimeSort":1462859708000,"positonTypesMap":null,"hrScore":80,"flowScore":188,"showCount":1199,"pvScore":19.745453118211834,"plus":"是","businessZones":["中关村","北京大学","苏州街"],"publisherId":389753,"loginTime":1462866640000,"appShow":0,"calcScore":false,"showOrder":1433137697136,"haveDeliver":false,"orderBy":71,"adWord":0,"formatCreateTime":"13:55发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-10 
17:57:55","companyId":89004,"positionName":"Android","positionType":"移动开发","workYear":"不限","education":"学历不限","jobNature":"全职","companyShortName":"温州康宁医院股份有限公司","city":"杭州","salary":"10k-20k","financeStage":"上市公司","positionId":1410975,"companyLogo":"i/image/M00/02/C2/CgqKkVabp--APWTjAACHHJJxyPc207.png","positionFirstType":"技术","companyName":"的的心理","positionAdvantage":"上市公司内部创业团队","industryField":"移动互联网 · 医疗健康","score":1335,"district":null,"companyLabelList":["年底双薪","股票期权","带薪年假","招募合伙人"],"deliverCount":9,"leaderName":"杨怡","companySize":"500-2000人","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":48,"imstate":"today","createTimeSort":1462874275000,"positonTypesMap":null,"hrScore":74,"flowScore":144,"showCount":2085,"pvScore":77.9570832081189,"plus":"是","businessZones":null,"publisherId":3655492,"loginTime":1462873104000,"appShow":711,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":69,"adWord":0,"formatCreateTime":"17:57发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-09 09:46:32","companyId":113895,"positionName":"Android开发工程师","positionType":"移动开发","workYear":"3-5年","education":"本科","jobNature":"全职","companyShortName":"北京互动金服科技有限公司","city":"北京","salary":"15k-25k","financeStage":"成长型(不需要融资)","positionId":1473342,"companyLogo":"i/image/M00/03/D8/CgqKkVbEA_uAe1k4AAHTfy3RxPY812.jpg","positionFirstType":"技术","companyName":"互动科技","positionAdvantage":"五险一金 补充医疗 年终奖 福利津贴","industryField":"移动互联网 · 
O2O","score":1326,"district":"海淀区","companyLabelList":,"deliverCount":32,"leaderName":"暂没有填写","companySize":"50-150人","randomScore":0,"countAdjusted":false,"relScore":980,"adjustScore":48,"imstate":"today","createTimeSort":1462758392000,"positonTypesMap":null,"hrScore":82,"flowScore":153,"showCount":3741,"pvScore":67.01698353391613,"plus":"是","businessZones":["白石桥","魏公村","万寿寺","白石桥","魏公村","万寿寺"],"publisherId":3814477,"loginTime":1462874842000,"appShow":0,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":63,"adWord":0,"formatCreateTime":"1天前发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-10 16:50:51","companyId":23999,"positionName":"Android","positionType":"移动开发","workYear":"1-3年","education":"本科","jobNature":"全职","companyShortName":"南京智鹤电子科技有限公司","city":"长沙","salary":"8k-12k","financeStage":"成长型(A轮)","positionId":1804917,"companyLogo":"image1/M00/35/EB/CgYXBlWc5KGAVeL8AAAOi4lPhWU502.jpg","positionFirstType":"技术","companyName":"智鹤科技","positionAdvantage":"弹性工作制 技术氛围浓厚","industryField":"移动互联网","score":1322,"district":null,"companyLabelList":["股票期权","绩效奖金","专项奖金","年终分红"],"deliverCount":1,"leaderName":"暂没有填写","companySize":"50-150人","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":0,"imstate":"disabled","createTimeSort":1462870251000,"positonTypesMap":null,"hrScore":62,"flowScore":191,"showCount":283,"pvScore":15.939035855045429,"plus":"否","businessZones":null,"publisherId":282621,"loginTime":1462869967000,"appShow":0,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":69,"adWord":0,"formatCreateTime":"16:50发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-08 
23:12:56","companyId":24287,"positionName":"android","positionType":"移动开发","workYear":"3-5年","education":"本科","jobNature":"全职","companyShortName":"杭州腾展科技有限公司","city":"杭州","salary":"15k-22k","financeStage":"成熟型(D轮及以上)","positionId":1197868,"companyLogo":"image1/M00/0B/7D/Cgo8PFTzIBOAEd2dAACMq9tQoMA797.png","positionFirstType":"技术","companyName":"腾展叮咚(Dingtone)","positionAdvantage":"每半年调整薪资,今年上市!","industryField":"移动互联网 · 社交网络","score":1322,"district":"西湖区","companyLabelList":["出国旅游","股票期权","精英团队","强悍的创始人"],"deliverCount":9,"leaderName":"魏松祥(Steve Wei)","companySize":"50-150人","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":48,"imstate":"disabled","createTimeSort":1462720376000,"positonTypesMap":null,"hrScore":71,"flowScore":137,"showCount":3786,"pvScore":87.51582865460942,"plus":"是","businessZones":["文三路","古荡","高新文教区"],"publisherId":2946659,"loginTime":1462891920000,"appShow":940,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":66,"adWord":0,"formatCreateTime":"2天前发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-10 
09:38:43","companyId":19875,"positionName":"Android","positionType":"移动开发","workYear":"3-5年","education":"本科","jobNature":"全职","companyShortName":"维沃移动通信有限公司","city":"南京","salary":"12k-24k","financeStage":"初创型(未融资)","positionId":938099,"companyLogo":"image1/M00/00/25/Cgo8PFTUWH-Ab57wAABKOdLbNuw116.png","positionFirstType":"技术","companyName":"vivo","positionAdvantage":"vivo,追求极致","industryField":"移动互联网","score":1320,"district":"建邺区","companyLabelList":["年终分红","五险一金","带薪年假","年度旅游"],"deliverCount":4,"leaderName":"暂没有填写","companySize":"2000人以上","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":48,"imstate":"today","createTimeSort":1462844323000,"positonTypesMap":null,"hrScore":57,"flowScore":149,"showCount":981,"pvScore":72.14107985481958,"plus":"是","businessZones":["沙洲","小行","赛虹桥"],"publisherId":302876,"loginTime":1462871424000,"appShow":353,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":66,"adWord":0,"formatCreateTime":"09:38发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-10 09:49:59","companyId":20473,"positionName":"安卓开发工程师","positionType":"移动开发","workYear":"3-5年","education":"大专","jobNature":"全职","companyShortName":"广州棒谷网络科技有限公司","city":"广州","salary":"8k-15k","financeStage":"成长型(A轮)","positionId":1733545,"companyLogo":"image1/M00/0F/36/Cgo8PFT9AgGAciySAAA1THfEIAE433.jpg","positionFirstType":"技术","companyName":"广州棒谷网络科技有限公司","positionAdvantage":"五险一金 大平台 
带薪休假","industryField":"电子商务","score":1320,"district":null,"companyLabelList":["项目奖金","绩效奖金","年终奖","五险一金"],"deliverCount":15,"leaderName":"大邹","companySize":"500-2000人","randomScore":0,"countAdjusted":false,"relScore":980,"adjustScore":48,"imstate":"today","createTimeSort":1462844999000,"positonTypesMap":null,"hrScore":79,"flowScore":144,"showCount":3943,"pvScore":77.78928844199473,"plus":"是","businessZones":null,"publisherId":235413,"loginTime":1462878251000,"appShow":1562,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":69,"adWord":0,"formatCreateTime":"09:49发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-04 11:41:59","companyId":87117,"positionName":"Android","positionType":"移动开发","workYear":"1-3年","education":"本科","jobNature":"全职","companyShortName":"南京信通科技有限责任公司","city":"南京","salary":"10k-12k","financeStage":"成长型(不需要融资)","positionId":966059,"companyLogo":"image1/M00/3F/51/CgYXBlXASfuADyOsAAA_na14zho635.jpg?cc=0.6724986131303012","positionFirstType":"技术","companyName":"联创集团信通科技","positionAdvantage":"提供完善的福利和薪酬晋升制度","industryField":"移动互联网 · 教育","score":1320,"district":"鼓楼区","companyLabelList":["节日礼物","带薪年假","补充医保","补充子女医保"],"deliverCount":4,"leaderName":"暂没有填写","companySize":"150-500人","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":48,"imstate":"today","createTimeSort":1462333319000,"positonTypesMap":null,"hrScore":88,"flowScore":143,"showCount":3161,"pvScore":79.41660570401937,"plus":"是","businessZones":["虎踞路","龙江","西桥"],"publisherId":2230973,"loginTime":1462871511000,"appShow":711,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":41,"adWord":0,"formatCreateTime":"2016-05-04","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-10 
09:26:58","companyId":103051,"positionName":"Android","positionType":"移动开发","workYear":"1-3年","education":"大专","jobNature":"全职","companyShortName":"浙江米果网络股份有限公司","city":"杭州","salary":"12k-22k","financeStage":"成长型(A轮)","positionId":1233873,"companyLogo":"image2/M00/10/DF/CgqLKVYwKQSAR2p4AAJAM590SJM137.png?cc=0.17502541467547417","positionFirstType":"技术","companyName":"米果小站","positionAdvantage":"充分的发展成长空间","industryField":"移动互联网","score":1320,"district":"滨江区","companyLabelList":["年底双薪","股票期权","午餐补助","五险一金"],"deliverCount":5,"leaderName":"暂没有填写","companySize":"150-500人","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":48,"imstate":"disabled","createTimeSort":1462843618000,"positonTypesMap":null,"hrScore":72,"flowScore":137,"showCount":1582,"pvScore":87.93158026917071,"plus":"是","businessZones":["江南","长河","西兴"],"publisherId":2992735,"loginTime":1462889479000,"appShow":312,"calcScore":false,"showOrder":0,"haveDeliver":false,"orderBy":63,"adWord":0,"formatCreateTime":"09:26发布","totalCount":0,"searchScore":0.0},{"createTime":"2016-05-10 10:01:39","companyId":70044,"positionName":"Android","positionType":"移动开发","workYear":"1-3年","education":"大专","jobNature":"全职","companyShortName":"武汉平行世界网络科技有限公司","city":"武汉","salary":"9k-13k","financeStage":"初创型(天使轮)","positionId":664813,"companyLogo":"image2/M00/00/3E/CgqLKVXdccSAQE91AACv-6V33Vo860.jpg","positionFirstType":"技术","companyName":"平行世界","positionAdvantage":"弹性工作、带薪年假、待遇优厚、3号线直达","industryField":"电子商务 · 
文化娱乐","score":1320,"district":"蔡甸区","companyLabelList":["年底双薪","待遇优厚","专项奖金","带薪年假"],"deliverCount":18,"leaderName":"暂没有填写","companySize":"50-150人","randomScore":0,"countAdjusted":false,"relScore":1000,"adjustScore":48,"imstate":"today","createTimeSort":1462845699000,"positonTypesMap":null,"hrScore":76,"flowScore":128,"showCount":1934,"pvScore":99.6001810598155,"plus":"是","businessZones":["沌口"],"publisherId":1694134,"loginTime":1462892151000,"appShow":759,"calcScore":false,"showOrder":1433141412915,"haveDeliver":false,"orderBy":68,"adWord":0,"formatCreateTime":"10:01发布","totalCount":0,"searchScore":0.0}]}}}

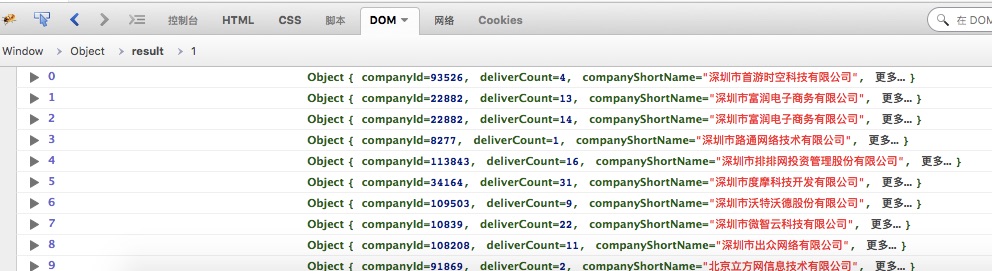

In the XHR panel, click the JSON tab to see the data the server sent back to the browser. It matches what the Python script above fetched.

Simple, isn't it?
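Once the JSON text is in hand, pulling out the fields you care about takes only a few lines. The sketch below is a rough illustration, not the original script: it assumes the records sit under content → positionResult → result, as in the response printed earlier, and the `summarize` helper is a made-up name. The sample record is trimmed down to three fields copied from the last job in that output. It is written for Python 3.

```python
import json

# A trimmed sample with the same nesting as the real response.
# The values are copied from the last record in the output above.
sample_text = """
{"content": {"positionResult": {"result": [
  {"companyShortName": "武汉平行世界网络科技有限公司",
   "city": "武汉",
   "salary": "9k-13k"}
]}}}
"""


def summarize(response_text):
    """Return (company, city, salary) tuples from a response body.

    Hypothetical helper: assumes the content -> positionResult -> result
    nesting seen in the captured response.
    """
    payload = json.loads(response_text)
    rows = payload["content"]["positionResult"]["result"]
    return [(r["companyShortName"], r["city"], r["salary"]) for r in rows]


for company, city, salary in summarize(sample_text):
    print(company, city, salary)
```

With a real response you would pass `return_data.text` in place of `sample_text`.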

What about page 2 or page 3?

Just change the value of pn:

post_data = {'first':'true','kd':'Android','pn':'2'}  # fetch page 2

To fetch everything, loop over the page numbers.
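Here is one way such a loop might look. Treat it as a sketch under a few assumptions of mine: the function name `fetch_all_pages` and its `post`, `max_pages` and `delay` parameters are invented for illustration, the records are assumed to live under content → positionResult → result, and an empty result list is taken to mean there are no more pages. The short pause between requests is also my addition. The `post` argument is any callable shaped like `requests.post(url, data=...)`, which keeps the loop easy to try out without hitting the site. Written for Python 3.

```python
import json
import time


def fetch_all_pages(post, url, keyword, max_pages=3, delay=1.0):
    """Collect job records page by page.

    `post` is any callable shaped like requests.post(url, data=...)
    that returns an object with a `.text` attribute.
    """
    jobs = []
    for page in range(1, max_pages + 1):
        # Same three form fields as in the captured request; only pn changes.
        post_data = {"first": "true", "kd": keyword, "pn": str(page)}
        payload = json.loads(post(url, data=post_data).text)
        result = payload["content"]["positionResult"]["result"]
        if not result:
            # Assumption: an empty page means nothing is left to fetch.
            break
        jobs.extend(result)
        time.sleep(delay)  # small pause between requests
    return jobs
```

With requests installed, a call would look like `fetch_all_pages(requests.post, url, "Android")`, where `url` is the POST address seen in the capture.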

All rights reserved. Please credit the source when reposting: www.30daydo.com



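On JavaScript-driven dynamic pages, the content does not appear in the page source, but you can capture the network traffic and find the JSON data behind it. The page fills itself in from that JSON, and that is what you end up seeing on screen.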


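I have captured traffic with Chrome, Firefox, and Wireshark. Chrome is the most convenient because it needs no third-party plugins, but every newly opened page needs its own capture panel, so some real-time data gets missed.

Wireshark requires learning its own filter rules, which carries a real learning cost.

The best option is still Firefox with the third-party Firebug plugin.

The rest of this post uses Lagou (拉勾网) as the example.

Turn on Firebug.

On www.lagou.com, click any job category in the left sidebar. This example uses Android.

In Firebug, open the "Net" (网络) tab and then select XHR.

The POST request is the one we care about. Click its link.

After clicking Android, the browser sends several parameters to Lagou's server:

first = true, kd = android (the keyword), and pn = 1 (the page number).

So we can imitate this step and build the same request data to simulate the user's click.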
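To see what that "data packet" amounts to on the wire: a form POST sends the fields as a URL-encoded body, and that is what requests does with a dict passed as `data=`. The snippet below is only a quick preview using the standard library's `urlencode` (Python 3), not part of the original script.

```python
from urllib.parse import urlencode

# The three form fields seen in the captured POST request.
post_data = {"first": "true", "kd": "Android", "pn": "1"}

# Form-encode the fields the way an HTML form submission would.
body = urlencode(post_data)
print(body)
```

Comparing this string with the POST body shown in Firebug is a cheap sanity check before sending anything.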

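post_data = {'first':'true','kd':'Android','pn':'1'}

Then submit the data with requests, one of the simplest Python libraries to use. This data is exactly what showed up in the capture.

import requests

# URL is elided here; use the POST address shown in the capture
url = "http://www.lagou.com/jobs/posi ..."

return_data = requests.post(url, data=post_data)

print(return_data.text)

There: the printed output is the JSON data that came back.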